The IT industry has seen many evolutions, and it is in the midst of another major paradigm shift. Few technologies have captured more attention than big data, and there is tremendous interest in business use cases featuring big data and analytics. Gartner highlighted the top ten technologies and trends that will be strategic for most organizations in 2018; strategic big data and actionable analytics were among them. In 2019, Gartner released its top ten IT trends again. This time, the list included mobile, the Internet of Things, and smart machines, with big data and analytics becoming enablers: a hidden force working behind the scenes to drive these business and IT innovations.

New devices and new data sources introduce new technologies. One thing that we all have learned through various paradigm shifts is that new tools rarely replace old tools. Over time, the IT landscape becomes more complex and multifaceted. As a result, big data and analytics initiatives benefit from an enterprise architecture (EA) approach to ensure alignment and success. It's a delicate balance between strategic direction and exploratory initiatives. The traditional EA approach tends to focus on the long-term vision, whereas Oracle applies an adaptable and flexible approach to enterprise architecture. Referred to as the Oracle Enterprise Architecture Framework (OEAF), it includes a "just enough, just in time" process for architecture development, called the Oracle Architecture Development Process (OADP), as shown in Figure 1. This methodology ensures that the appropriate guidelines are established during the planning and architectural phases.

FIGURE 1. OEAF and OADP

Information architecture is one of the four domains of OEAF. The focus of this layer is to manage the architecture of various data assets of an enterprise, including the types of data, the business processes they support, and the technologies, people, and information processes needed to enable the effective use of these assets. Figure 2 is a high-level view of Oracle’s Information Architecture Framework. It’s composed of data realms and management capabilities.

FIGURE 2. Oracle Information Architecture Framework

Data realms are the black slices in the middle of the diagram in Figure 2. They are the different types and classifications of data, including master data, reference data, metadata, transactions, analytical data, documents and content, and big data. The second element, depicted in the outer circle of the diagram, comprises the capabilities you need to manage these varied data types and classifications.

There are nine level-1 management capabilities. They include data integration, infrastructure management, data sharing and distribution, business intelligence and data warehousing, data governance and metadata management, data security, master data management, information sharing and delivery, and the enterprise data model. We’ll provide links in the appendix where you can find out more details about these capabilities, maturity model, and architecture development process.

The rationale for starting with this capability model is that it's important to avoid treating big data and new analytical initiatives as singular entities or endpoints. Rather, you need to determine how they fit into your existing information architecture so that you can build new capabilities incrementally and maximize your existing investment. Figure 3 highlights the overall process to develop an analytical architecture.

FIGURE 3. Architecture development process for business analytics

The six main steps of the architecture development approach are as follows:

- Align with the business architecture.

- Define the architecture vision.

- Assess the current-state architecture.

- Establish the future-state architecture.

- Determine the strategic road map.

- Implement governance.

We will cover each of these steps next.

Align with the Business Architecture

A number of key business strategy requirements drive the need for business analytics. Companies are investing in business analytics to enhance business competitiveness, enable fast-paced business processes, centralize business control, improve operational efficiency, support compliance and reorganizations, and facilitate mergers and acquisitions. It's critical to understand and align with the main priorities driving your organization.

The business operating model is a key element of business architecture. It is used to define the degree of business process standardization versus the degree of business process and data integration. The four types of operating models identified in Enterprise Architecture As Strategy: Creating a Foundation for Business Execution (Harvard Business Review Press, 2006) are coordinated, diversified, unified, and replicated.

It is important to understand an organization's current- and future-state operating models in order to support analytical investment decisions and align architecture recommendations with the operational characteristics of the business. For example, a coordinated operating model might imply higher value from an investment in analytics as a service than from an investment in consolidated storage hardware platforms. A diversified operating model, by contrast, has low standardization and low data integration, which enables maximum business agility. Companies moving from a replicated operating model to a unified model will require significant change to break down data-sharing barriers and will therefore need enhanced data integration capabilities. Understanding the overall direction will help you define an analytical architecture and implement analytical solutions that enable and facilitate business transformation.
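The four operating models form a two-by-two grid of business process standardization versus business process and data integration. The following minimal sketch places a business unit in that grid and flags transitions, such as replicated to unified, that raise the required level of data integration. It assumes a simple high/low judgment on each axis. The function and enum names are hypothetical illustrations, not part of any Oracle tool.

```python
from enum import Enum

class OperatingModel(Enum):
    DIVERSIFIED = "diversified"   # low standardization, low integration
    COORDINATED = "coordinated"   # low standardization, high integration
    REPLICATED = "replicated"     # high standardization, low integration
    UNIFIED = "unified"           # high standardization, high integration

def classify(high_standardization: bool, high_integration: bool) -> OperatingModel:
    """Place a business unit in the standardization/integration quadrant."""
    if high_standardization:
        return OperatingModel.UNIFIED if high_integration else OperatingModel.REPLICATED
    return OperatingModel.COORDINATED if high_integration else OperatingModel.DIVERSIFIED

def needs_integration_investment(current: OperatingModel, target: OperatingModel) -> bool:
    """Flag moves from a low-integration model to a high-integration one."""
    high_integration = {OperatingModel.COORDINATED, OperatingModel.UNIFIED}
    return current not in high_integration and target in high_integration
```

For example, `needs_integration_investment(OperatingModel.REPLICATED, OperatingModel.UNIFIED)` returns `True`, matching the observation that such a move calls for enhanced data integration capabilities.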

Business processes are another component of the business architecture. We are not suggesting that you develop a full-blown business architecture including a detailed business process mapping before you start any analytical initiative. However, it's important to understand your data assets in the context of your business processes: what processes they support, how important these processes are to the overall business success, how critical these data sets are in support of these processes, and what the risks are of a data breach or of a data set becoming unavailable. Developing a high-level rationalization of data assets in the context of key business processes will improve the efficiency of the overall analytical solution.

Define the Architecture Vision

Principles define the guiding framework for architecture. Analytical initiatives must comply with established architectural principles in organizations, such as leveraging open standards. Here are some common guiding principles with regard to analytical architecture.

Alignment with Overall Information Architecture

The first guiding principle is the need to align with the overall information architecture.

Statement The analytical solution needs to be in alignment with the enterprise information architecture.

Rationale The value of big data is maximized when it can be correlated with existing enterprise information and business processes.

Implications Here are the implications:

- Appropriate technology, process, and people are needed to correlate and integrate big data analytic results with enterprise information, applications, and business processes.

- Conduct high-level data and technology rationalization in the overall information architecture to avoid big data and analytical silos.

Approachable Analytics

Our second guiding principle concerns making analytics approachable.

Statement Information and analysis must be available to all users, processes, and applications across the organization that can benefit from it. The reach of decision-making analysis must expand to include all knowledge workers in the organization and the applications they use.

Rationale More than 50 percent of users do not use a BI solution today because of its perceived complexity and lack of flexibility. The new analytical solution needs to accommodate users' skill levels and the information needs of their specific roles.

Implications Here are the implications:

- The analytical solution needs to provide multi-user and multi-usage type support. The architecture must provide the ability to work with different forms of data using query, manipulation, and rendering techniques that are appropriate for the task. It must also support various forms of analysis such as OLAP, statistical analysis, and sentiment analysis.

- The analytical solution needs to enable more user self-service to remove the IT bottleneck.

- Analysis should be integrated into application user interfaces, devices, and processes such that users gain insight where and when they need it.

- Analytical systems should be integrated with business processes so that they automatically leverage the available information to optimize operational processes.

- Analytics should be available as a shared service to promote its use.

- The architecture must enable end users who are not familiar with data structures and analytical tools to view information pertinent to their needs.

Continuous Availability

The third guiding principle is regarding the availability of the solution.

Statement The analytical solution needs to provide continuous availability to users where and when they need it.

Rationale Analytical solutions are most effective when they become embedded within critical business processes. As an organization's analytical culture continues to mature, these solutions will increasingly become tier-1 applications that require minimal or no downtime.

Implications Here are the implications:

- Analytical solutions need to be designed with high availability and disaster recovery capabilities in mind.

- The analytical architecture needs to incorporate elastic scalability to account for increasing data volume and model frequency without performance degradation.

Data Storage Agnostic

The fourth guiding principle attends to the data storage approach.

Statement The analytical architecture needs to shield data and information consumers from the complexity and diversity of the storage layer.

Rationale Data acquisition will come in various forms. Consumption-layer architecture should avoid introducing new data or analysis processing with a new set of tools and technologies, particularly if doing so produces new silos of data and analysis artifacts that cannot integrate easily and properly.

Implications Here are the implications:

- The data consumption architecture components should be capable of handling different types of data and different forms of analysis processing.

- Virtualization capabilities are required to establish uniform data access across data stores.
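To make the virtualization idea concrete, here is a minimal sketch of a virtual access layer. Consumers query logical entities by name, and the layer routes each request to whichever physical store holds the data. SQLite stands in for a relational database and a plain dictionary stands in for a NoSQL key-value store. `VirtualLayer`, `RelationalSource`, and `KeyValueSource` are hypothetical names, not a product API.

```python
import sqlite3
from typing import Iterable, Protocol

Row = dict[str, object]

class DataSource(Protocol):
    def scan(self, entity: str) -> Iterable[Row]: ...

class RelationalSource:
    """Relational store (SQLite here, as a stand-in for an RDBMS)."""
    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.conn.row_factory = sqlite3.Row  # rows become name-addressable

    def scan(self, entity: str) -> Iterable[Row]:
        # Assumes `entity` is a trusted table name registered by an administrator.
        for r in self.conn.execute(f"SELECT * FROM {entity}"):
            yield dict(r)

class KeyValueSource:
    """Key-value store (a dict here, as a stand-in for a NoSQL database)."""
    def __init__(self, data: dict[str, dict[str, Row]]):
        self.data = data

    def scan(self, entity: str) -> Iterable[Row]:
        return iter(self.data.get(entity, {}).values())

class VirtualLayer:
    """Routes each logical entity to the physical store that holds it."""
    def __init__(self):
        self.routes: dict[str, DataSource] = {}

    def register(self, entity: str, source: DataSource) -> None:
        self.routes[entity] = source

    def query(self, entity: str, **filters: object) -> list[Row]:
        rows = self.routes[entity].scan(entity)
        return [r for r in rows if all(r.get(k) == v for k, v in filters.items())]
```

With this design, the consumer-facing call `layer.query("customers", region="EMEA")` stays the same whether `customers` lives in a relational table or is later moved to another store. Only the `register` call changes.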

Shared Metadata

The fifth principle speaks to the approach to metadata management.

Statement The analytical architecture must provide the ability to define and share metadata across various analytical tools.

Rationale Information can have different meanings to different people and lines of business. Standardizing and sharing metadata at the enterprise level improves the accuracy of analysis and reduces the cost of reconciliation.

Implication Here’s the implication:

- The analytical architecture must provide a way to catalog and define analytical artifacts, including predictive models, formulas, calculations, aggregation, cross-references, graphs, tables, charts, dashboards, and reports.
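A shared catalog is, at its core, a single registry of agreed definitions that every analytical tool consults. The minimal sketch below assumes an in-memory registry and rejects a second, conflicting definition for the same artifact name, which is the reconciliation problem the rationale describes. The class and field names are illustrative assumptions.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Artifact:
    name: str
    kind: str          # e.g., "predictive model", "formula", "dashboard"
    definition: str    # the agreed business meaning
    owner: str
    tags: frozenset[str] = field(default_factory=frozenset)

class MetadataCatalog:
    """One registry that all analytical tools consult for definitions."""
    def __init__(self):
        self._artifacts: dict[str, Artifact] = {}

    def register(self, artifact: Artifact) -> None:
        existing = self._artifacts.get(artifact.name)
        # Refuse silent redefinition: two meanings for one name is exactly
        # what shared metadata is meant to prevent.
        if existing and existing.definition != artifact.definition:
            raise ValueError(f"conflicting definition for {artifact.name!r}")
        self._artifacts[artifact.name] = artifact

    def lookup(self, name: str) -> Artifact:
        return self._artifacts[name]

    def find(self, kind: str) -> list[str]:
        return sorted(a.name for a in self._artifacts.values() if a.kind == kind)
```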

Actionable Insights

The sixth guiding principle is regarding the focus of the insights from the analytical solutions.

Statement The analytical solution needs to be capable of initiating actions automatically or through human intervention, based on insights obtained from the analysis.

Rationale Analysis is most effective when appropriate action can be taken at the time of the insight and discovery.

Implications Here are the implications:

- The architecture must support predictive analysis in addition to the traditional descriptive analytics.

- The system must provide alerting and event subscription functionality.
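Alerting and event subscription amount to a publish/subscribe pattern. Users or processes subscribe to a metric with a threshold, and each new value from the analysis is delivered only to the subscribers whose threshold it crosses. This is a minimal in-process sketch. `AlertBus` and the `churn_risk` metric are hypothetical, and a production system would use a messaging or event-processing platform instead.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[str, float], None]

class AlertBus:
    """Subscribers register a threshold per metric; breaches trigger handlers."""
    def __init__(self):
        self._subs: dict[str, list[tuple[float, Handler]]] = defaultdict(list)

    def subscribe(self, metric: str, threshold: float, handler: Handler) -> None:
        self._subs[metric].append((threshold, handler))

    def publish(self, metric: str, value: float) -> int:
        """Deliver a new metric value; return how many subscribers were alerted."""
        fired = 0
        for threshold, handler in self._subs[metric]:
            if value > threshold:
                handler(metric, value)  # e.g., notify a user or start a workflow
                fired += 1
        return fired
```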

Context-Based Governance

The seventh guiding principle focuses on the evolution of data governance.

Statement Data governance needs to be redefined to focus on the challenges introduced by these new classes of data, technologies, and analytical solutions.

Rationale We are seeing a continued move to machine learning and decision automation to keep pace with the speed and volume of data. As companies strive to operationalize analytics, they need to focus more on the optimal mix between human and machine capability and judgment.

Implications Here are the implications:

- Proper governance needs to be in place to facilitate the move to automated decision making and to determine when human intervention/interpretation is required based on the analytical model quality and level of confidence.

- The analytical solution needs to be capable of synthesizing analytical models to establish and measure analytical quality.

- The initial data exploration requires a looser governance model to maximize agility. However, as valuable data is uncovered and becomes incorporated into standard business processes, these same data assets need to follow the same governance standards at the enterprise level.

- The analytical architecture needs to incorporate data life-cycle management. Data archiving also becomes more important to avoid stale data sets plaguing analytical results and to ensure retention requirements are met.
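The first implication, deciding when an automated decision is acceptable and when a human must intervene, can be expressed as a simple governance rule. The minimal sketch below automates only when both the overall model quality (for example, accuracy on held-out data) and the confidence of the individual prediction clear a threshold. The threshold values and names are hypothetical policy settings, not recommendations.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    case_id: str
    action: str
    route: str  # "automated" or "human_review"

def route_decision(case_id: str, action: str, confidence: float,
                   model_quality: float, min_confidence: float = 0.9,
                   min_quality: float = 0.8) -> Decision:
    """Automate only when the model and this particular prediction are both trusted."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    trusted = model_quality >= min_quality and confidence >= min_confidence
    return Decision(case_id, action, "automated" if trusted else "human_review")
```

Keeping the thresholds as explicit parameters lets a governance body tighten or loosen them as model quality is measured over time.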

Balanced Access and Security Control

The eighth principle speaks to the changing landscape of security management.

Statement While pervasive information access is the overall goal, it’s important not to lose sight of security control.

Rationale New security challenges arise with the introduction of large amounts of data, especially data originating from machine-to-machine devices with no endpoint control, often referred to as the Internet of Things. Access control is also more complex across the multitude of mobile devices in use, because bring-your-own-device (BYOD) policies are seeing wide adoption in enterprises.

Implications Here are the implications:

- The information architecture effort needs to involve stratifying data assets based on security requirements and sensitivity, identifying highly sensitive data, and applying tight control through defense-in-depth, a multilayered security architecture.

- The architecture needs to provide forensic analysis capabilities to detect, report, and prevent uncharacteristic usage and access.
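Stratifying data by sensitivity lets access rules tighten as sensitivity rises, with extra layers for the most sensitive tier. The minimal sketch below assumes four hypothetical tiers and a hypothetical asset classification. Restricted data additionally requires multifactor authentication, unknown assets default to the strictest tier, and every decision is written to an audit log that could feed forensic analysis.

```python
from enum import IntEnum

class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3

# Hypothetical classification of data assets by sensitivity.
ASSET_TIERS = {
    "product_catalog": Sensitivity.PUBLIC,
    "sales_summary": Sensitivity.INTERNAL,
    "customer_profile": Sensitivity.CONFIDENTIAL,
    "payment_card": Sensitivity.RESTRICTED,
}

AUDIT_LOG: list[tuple[str, str, bool]] = []  # (user, asset, allowed)

def can_access(user: str, clearance: Sensitivity, asset: str, mfa_verified: bool) -> bool:
    """Layered check: clearance must cover the tier; restricted data also needs MFA."""
    tier = ASSET_TIERS.get(asset, Sensitivity.RESTRICTED)  # unknown -> strictest
    allowed = clearance >= tier and (tier < Sensitivity.RESTRICTED or mfa_verified)
    AUDIT_LOG.append((user, asset, allowed))  # every attempt is recorded
    return allowed
```

Denied attempts can then be reviewed with `[e for e in AUDIT_LOG if not e[2]]`.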

Assess the Current-State Architecture

Loosely speaking, an architecture for an IT system describes the various components of the system, the inputs and outputs of each component, and how the system interfaces with the other systems that it interacts with. We have been working with CIOs, CTOs, and chief architects of various organizations for quite some time on information architecture and big data analytics initiatives. It's common to hear this comment: "Our business wants to pursue big data solutions, but our information landscape is a mess." The biggest pain point we have encountered in many organizations is the barrier to data sharing. It's a result of numerous challenges within any organization; some are cultural in nature, some are related to existing processes and organization boundaries, and others are a result of siloed applications and inconsistent data definitions. Common symptoms of information silos include lack of data dimension, multiple reporting repositories, lack of insight into unstructured data, and proliferation of isolated departmental data marts. They are often the cause of poor user satisfaction and subpar business intelligence adoption.

Oracle's Architecture Development Process (OADP), as shown in Figures 1 and 3, focuses on "just-in-time" and "just-enough" architecture, especially when it comes to current-state assessment. We do not recommend a full-blown, "boil-the-ocean" current-state analysis, which prolongs the solution timeline with little value yielded. Instead, we recommend that enterprises take a top-down approach: get a high-level architecture overview of the current-state capabilities based on the information architecture capability model we mentioned earlier, and identify the key pain points and main obstacles that are hindering the adoption of new analytical solutions.

How do you know what is enough current-state information? The answer is simple: it is enough when you can determine the gap between the current state and the future-state vision, and when you have the information needed to develop a strategic road map with actionable recommendations. This means taking an iterative approach to developing the current state, the future state, and the road map: start with a high-level baseline of relevant capabilities, and then drill into specific architecture components and business domains based on priorities and dependencies.
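The gap that "enough" current-state information must reveal can be computed directly once capabilities are scored. The minimal sketch below assumes a hypothetical 1-to-5 maturity scale for a few capabilities from the information architecture capability model. It returns the positive gaps, largest first, as a starting point for road map prioritization.

```python
def capability_gaps(current: dict[str, int], target: dict[str, int]) -> list[tuple[str, int]]:
    """Return (capability, gap) pairs with a positive gap, largest gap first."""
    gaps = [(cap, target[cap] - current.get(cap, 0)) for cap in target]
    # Sort by gap size (descending), then alphabetically for a stable order.
    return sorted((g for g in gaps if g[1] > 0), key=lambda g: (-g[1], g[0]))

# Hypothetical maturity scores on a 1-5 scale.
current_state = {"data integration": 2, "data governance": 1,
                 "business intelligence": 3, "data security": 3}
future_state = {"data integration": 4, "data governance": 3,
                "business intelligence": 4, "data security": 3}
```

Here `capability_gaps(current_state, future_state)` ranks data governance and data integration (gap 2) ahead of business intelligence (gap 1) and omits data security, which has no gap.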

Establish the Future-State Architecture

Establishing the future-state analytical architecture means putting together a blueprint. Proceeding without one is like building a home without plans: the potential for failure is much greater without a sound architecture. An analytical architecture includes not just the blueprint of the different components and how they fit together, but also the standards, processes, applications, and infrastructure.

New Capabilities and Requirements

The high-level requirements of this new analytical platform are as follows:

- More types of data and storage platforms This is key to embracing the unstructured and schema-free data types found in most big data. Once these new data types are addressed, an organization obtains greater business value from big data and broader, more agile data sourcing for analytics. The new storage platform spans all stores used for analytics, including data warehouse appliances, columnar databases, and HDFS. The low cost of HDFS for historical data retention, together with its parallel processing of larger and unstructured data sets, holds the promise of expanding analytical capabilities to the next level.

- Information exploration and discovery capability The types of analytics currently on the rise (based on technologies for SQL, NoSQL, mining, statistics, and natural language processing) are all related to discovering facts about the business that were previously unknown.

- Real time Most enterprises expect real-time capabilities from analytical solutions to support fast business processes and decision making. Traditional relational databases and batch-oriented Hadoop systems were not built for real-time operations. Technologies that provide real-time functions include Apache Storm on Hadoop, Cloudera Impala, and event processing engines.
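Real-time analytics generally rely on incremental computation over a moving window rather than on batch recomputation. The minimal sketch below shows one such technique, a time-based sliding-window average updated per event. It is a generic illustration, not the API of Storm, Impala, or any event processing product. `SlidingWindowAverage` is a hypothetical name, and timestamps are assumed to arrive in order.

```python
from collections import deque

class SlidingWindowAverage:
    """Average of the values whose timestamps fall in (t - window, t]."""
    def __init__(self, window_seconds: float):
        self.window = window_seconds
        self.events: deque[tuple[float, float]] = deque()
        self.total = 0.0

    def add(self, timestamp: float, value: float) -> float:
        """Ingest one event (timestamps assumed non-decreasing); return the current average."""
        self.events.append((timestamp, value))
        self.total += value
        # Evict events that have fallen out of the window, keeping the sum incremental.
        while self.events and self.events[0][0] <= timestamp - self.window:
            _, old = self.events.popleft()
            self.total -= old
        return self.total / len(self.events)
```

Each event costs amortized constant time, which is what lets this style of computation keep up with a continuous stream.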

Hybrid Data and Storage Layer

With these new requirements, it’s important to plug new capabilities into a holistic architecture vision. Let’s first look at the data storage and processing layer.

No discussion about data architecture can occur without covering data warehouse architecture. Most organizations have data located across a large number of heterogeneous data sources. Analysts spend more time finding, gathering, and processing data than analyzing it. Data quality, access, and security are key issues for most organizations. The most common challenge analysts face is collecting data across multiple systems and then cleaning, harmonizing, and integrating it. It is estimated that analysts spend 80 percent of their time on data preparation, which is hardly a good use of such valuable, hard-to-find resources.

According to The Data Warehousing Institute (TDWI), the biggest trend in data warehouse architecture right now is the movement toward more diversified data platforms and related tools within the physical layer of the extended data warehouse environment. While there's a clear benefit to a centralized physical data warehousing strategy, more and more organizations are looking to establish a logical data warehouse, composed of a data hub or reservoir that combines different databases (relational or NoSQL) and HDFS. Data federation capabilities are needed, including a canonical data model, schema mapping, conflict resolution, and autonomy policy enforcement. In our experience, the best success occurs when the logical design is based primarily on the business: how it's organized, its key business processes, and how the business defines prominent entities such as customers, products, and financials.
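Two of the federation capabilities named above, schema mapping to a canonical model and conflict resolution, can be illustrated in a few lines. The minimal sketch below assumes hypothetical per-source field mappings and uses "latest update wins" as the conflict policy. That policy is only one simple choice among many, such as source priority or field-level survivorship rules.

```python
# Hypothetical per-source mappings from local field names to canonical names.
SCHEMA_MAP = {
    "crm": {"cust_no": "customer_id", "cust_name": "name", "upd": "updated_at"},
    "billing": {"account_id": "customer_id", "full_name": "name", "modified": "updated_at"},
}

def to_canonical(source: str, record: dict) -> dict:
    """Schema mapping: rename source fields to the canonical data model."""
    mapping = SCHEMA_MAP[source]
    return {mapping[k]: v for k, v in record.items() if k in mapping}

def resolve(records: list[dict]) -> dict:
    """Conflict resolution: apply records oldest-first so the latest update wins."""
    merged: dict = {}
    for rec in sorted(records, key=lambda r: r["updated_at"]):  # ISO dates sort correctly
        merged.update({k: v for k, v in rec.items() if v is not None})
    return merged
```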

In reality, most architectures evolve into a hybrid state, despite standards, plans, and preferences. Architectures are rarely 100 percent centralized or 100 percent distributed, even if that was the initial objective.

The key is to architect a multiplatform data environment without being overwhelmed by its complexity, which good architectural design can prevent. Hence, for many user organizations, a multiplatform physical plan coupled with a cross-platform logical design yields a data platform and architecture conducive to the new age of big data and analytical solutions.

Here are some guidelines for building this hybrid platform:

- First define logical layering, and organize and model your data assets according to purpose, business domain, and existing implementation.

- Integrate between technologies and layers so both IT-based and user-based efforts are supported.

- Consolidate where it makes sense and virtualize access as much as possible so that you can reduce the number of physical deployments and operational complexity.

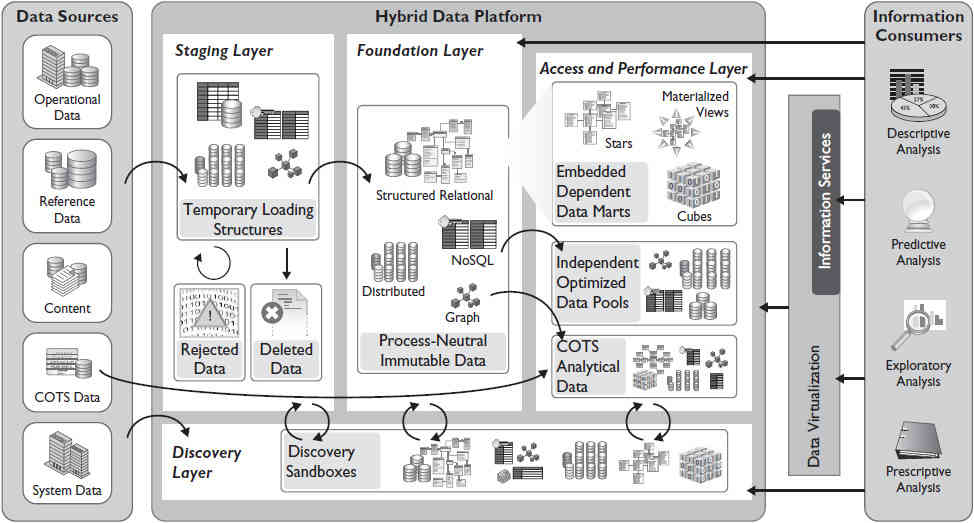

Figure 4 is a sample reference architecture for this hybrid data platform from Oracle’s architecture repository.

FIGURE 4. Hybrid data platform reference architecture

Here are the main layers of a hybrid data platform:

- Staging layer This is a landing pad for data quality management: initial cleansing, mapping, standardization, and conflict resolution.

- Foundation layer This focuses on historic data management at the atomic level. Process-neutral, this layer represents an enterprise data model with a holistic vision.

- Access and performance layer This is also known as an analytical layer, with business/functional-specific models, snapshots, aggregations, and summaries.

- Discovery layer This is where data exploration occurs with a significant amount of user autonomy.

It takes careful consideration to decide on the placement of data assets into each of the layers. Factors to consider include data subject area, level of grain (level of data detail and granularity), and level of time relevance (whether it’s operational or historic). The focus of consideration needs to be on data and information consumption. These layers will correspond to different types of data storage and retrieval mechanisms with the most effective integration capabilities, performance characteristics, and security measures in place.
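The placement factors above can be captured as explicit rules so that placement decisions are consistent and reviewable. The minimal sketch below is a deliberate simplification built on hypothetical attributes and rules. Real placement decisions also weigh subject area, performance, and security requirements.

```python
from dataclasses import dataclass

@dataclass
class DataAsset:
    name: str
    cleansed: bool       # has it passed initial quality processing?
    atomic_grain: bool   # detail-level data rather than aggregates or summaries
    historic: bool       # historic rather than operational time relevance
    exploratory: bool    # used for user-driven discovery

def place(asset: DataAsset) -> str:
    """Map an asset to a layer of the hybrid data platform (hypothetical rules)."""
    if asset.exploratory:
        return "discovery"
    if not asset.cleansed:
        return "staging"
    if asset.atomic_grain and asset.historic:
        return "foundation"
    return "access and performance"
```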

Data and Analytics as a Service

A solid data hub is the first piece of the architecture design. However, the architects' work doesn't stop there. Now let's look at how to deliver data and analytics as a service. Figure 5 shows a data-as-a-service blueprint. Through integrated data and shared services, the main objectives of data and analytics as a service are to provide data interoperability, integrated analytics, and an agile data platform that delivers quick time to value while allowing you to continue to embrace open-source innovations.

FIGURE 5. Blueprint for data and analytics as a service

The top layer in Figure 5 represents an end-to-end big data life-cycle process, described in detail as follows:

- Acquisition As various data is captured, it can be stored and processed in traditional RDBMSs, flat files, HDFS, NoSQL databases, or a streaming event model.

- Organization Architecturally, one of the critical components that links big data to the rest of the data realms is the organization layer. This layer needs to extend across all of the data types and domains and bridge the gap between the traditional and new data acquisition and processing frameworks.

- Analysis The analytical layer contains advanced analytics capabilities such as various data mining and predictive analytics algorithms, spatial and text analytics, and the event processing engine to analyze streamed data in real time.

- Consumption The BI layer will be equipped with information exploration, discovery, and advanced visualization, on top of traditional BI components such as reports, dashboards, queries, and scorecards.

- Governance and security This covers the entire spectrum of the data and information landscape at the enterprise level.

As mentioned earlier, recent analyst predictions call for building smaller, more nimble analytics capabilities. This is where service-oriented architecture plays a key role. Let's look at the service layers in more detail in the context of these life-cycle capabilities.

Acquisition

There are three high-level categories of services in this stage:

- Data movement services for bulk and incremental movement and replication

- Data processing services for batch and real-time as well as stream processing

- Storage information life-cycle management (ILM) services for archiving and retention management
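Incremental data movement is commonly implemented with a high-water mark. Each run extracts only the rows changed since the last recorded timestamp and then advances the mark. The minimal sketch below uses SQLite as a stand-in source. The `orders` table and its `id`, `amount`, and `updated_at` columns are hypothetical.

```python
import sqlite3

def incremental_extract(conn: sqlite3.Connection, table: str,
                        watermark: str) -> tuple[list[tuple], str]:
    """Pull only rows changed since the last high-water mark; return rows and the new mark."""
    # Assumes `table` is a trusted name and `updated_at` holds ISO-8601 text.
    rows = conn.execute(
        f"SELECT id, amount, updated_at FROM {table} "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    new_mark = rows[-1][2] if rows else watermark  # unchanged if nothing is new
    return rows, new_mark
```

Persisting `new_mark` between runs turns repeated bulk copies into small deltas. Capturing deletes, however, requires change data capture or soft-delete flags, which this sketch does not handle.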

Organization

IDC predicts the cohabitation of traditional database technology (RDBMS) with the newer Hadoop ecosystem and NoSQL databases, concluding that in the short term, information management will become more complex for most organizations.

Various organization services play a critical role in reducing this complexity. Categories of services include the following:

- Data enrichment services

- Data aggregation services

- Data joining and merging services

- Data model services for canonical data model, schema mapping, and conflict resolution

- Integrated metadata services, critical to providing a common language for data residing across different data storage models
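Enrichment and aggregation services often work as a pair: raw records are first joined to reference data and then rolled up along the new attribute. The minimal sketch below enriches hypothetical transactions with a store's region and totals the amounts by region. Unmatched keys are tagged `UNKNOWN` rather than dropped, so data quality problems remain visible.

```python
from collections import defaultdict

def enrich(transactions: list[dict], stores: dict[str, dict]) -> list[dict]:
    """Enrichment: join each transaction to store reference data."""
    return [{**t, "region": stores.get(t["store_id"], {}).get("region", "UNKNOWN")}
            for t in transactions]

def aggregate(rows: list[dict], key: str, measure: str) -> dict[str, float]:
    """Aggregation: sum a measure grouped by a key."""
    totals: dict[str, float] = defaultdict(float)
    for r in rows:
        totals[r[key]] += r[measure]
    return dict(totals)
```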

Analysis

Let's move on to analytics as a service. Event correlation services are becoming mainstream in smart grid, fraud detection, healthcare, security intelligence, cyber, financial, and public safety use cases. Other categories of analysis services include data mining, predictive analytics, spatial, and text. Typical data mining services expose algorithms to perform the following:

- Classification

- Pattern matching

- Anomaly detection

- Attribute importance

- Association rules

- Clustering
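As an example of what an anomaly detection service might expose, the sketch below implements one of the simplest techniques: flagging values whose z-score (distance from the mean in standard deviations) exceeds a threshold. It is an illustration only. Production services typically use more robust methods, because a single large outlier inflates the standard deviation itself.

```python
from statistics import mean, pstdev

def zscore_anomalies(values: list[float], threshold: float = 3.0) -> list[int]:
    """Return indexes of values more than `threshold` standard deviations from the mean."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return []  # all values identical: nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]
```

For instance, in `[10, 11, 9, 10, 12, 10, 11, 95]` the last value lies about 2.6 standard deviations from the mean. It is flagged with `threshold=2.0` but not with the default of 3.0, which shows how sensitive this simple method is to sample size and threshold choice.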

On the predictive analytics services side, there are algorithms for the following:

- Regression

- Time series analysis

- Case-based reasoning

- Neural networks

- Multilayer perceptron (MLP)
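Regression is the foundation of many predictive services. The sketch below fits a simple linear regression, y = a + b·x, by ordinary least squares: the slope b is the covariance of x and y divided by the variance of x, and the intercept is a = ȳ − b·x̄. It illustrates the technique only and does not reflect any particular product's interface.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = a + b*x; returns (intercept a, slope b)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired observations")
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b
```

For points lying exactly on y = 1 + 2x, such as `fit_line([1, 2, 3, 4], [3, 5, 7, 9])`, the function recovers an intercept of 1 and a slope of 2.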

Consumption

The BI infrastructure can be exposed to provide reports, graphs, and advanced visualization as a service. Content search, publishing, and subscription capabilities can also be exposed as services.

- Various consumption services include the traditional query and reports, distribution, and alerting services.

- More advanced consumption services have surfaced recently. One example is services to provide federated searching, automatic clustering of data, data taxonomy, and context-based faceted search.

- Another example is a virtual documents service that dynamically builds a consolidated view based on the user's access rights and points of interest.

- There are also new types of exploration and visualization services that combine tabular views, tag clouds, guided navigation, maps, charts, and statistical visualization packages (from R, for example) to convey striking messages and reveal data relationships that simple tables and numbers cannot.
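Faceted search, one of the consumption services listed above, returns both the matching items and per-facet counts so users can narrow results step by step. The minimal sketch below assumes documents are dictionaries with a `body` text field plus facet fields. It uses plain substring matching in place of a real search index, and the field names are hypothetical.

```python
from collections import Counter
from typing import Optional

def faceted_search(docs: list[dict], text: str, facets: list[str],
                   selected: Optional[dict[str, str]] = None) -> tuple[list[dict], dict[str, Counter]]:
    """Return matching docs plus value counts for each facet among the matches."""
    selected = selected or {}
    hits = [d for d in docs
            if text.lower() in d["body"].lower()
            and all(d.get(f) == v for f, v in selected.items())]
    # Counts are computed on the current hits, so they shrink as filters are applied.
    counts = {f: Counter(d[f] for d in hits if f in d) for f in facets}
    return hits, counts
```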

Security

There are four areas of security in an analytical architecture.

- Perimeter security This includes guarding access to the cluster through network security, firewalls, and, ultimately, authentication to confirm user identities.

- Data security This includes protecting the data in the cluster from unauthorized visibility through masking and encryption, both at rest and in transit.

- Access security This includes defining what authenticated users and applications can do with the data in the cluster through file system ACLs and fine-grained authorization.

- Visibility This includes reporting on the origins of data and on data usage through centralized auditing and lineage capabilities.

The security infrastructure can be exposed as a service to perform authentication, access control, auditing, encryption and decryption, and so on. Security services fall into two main categories: services that control data access, such as authentication, authorization, and auditing (the well-known AAA of security); and services that provide data protection, such as encryption, masking, and redaction.
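Masking and redaction, two of the data protection services just mentioned, differ in intent. Masking preserves format and partial usefulness, while redaction removes the sensitive value entirely. The minimal sketch below masks a card number down to its last four digits and redacts e-mail addresses from free text. The e-mail pattern is a simplified assumption and will not match every valid address.

```python
import re

def mask_card(number: str, visible: int = 4) -> str:
    """Masking: keep only the last `visible` digits of a card number."""
    digits = re.sub(r"\D", "", number)  # drop spaces and dashes
    if len(digits) <= visible:
        return "*" * len(digits)
    return "*" * (len(digits) - visible) + digits[-visible:]

# Simplified e-mail pattern (an assumption; not a full RFC 5322 matcher).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def redact(text: str) -> str:
    """Redaction: replace e-mail addresses in free text."""
    return EMAIL.sub("[REDACTED]", text)
```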

In short, the purpose of this blueprint is to further your understanding of a big data vision, help you prioritize the capability requirements and gaps, and establish a road map to incrementally build up these capabilities in a holistic and thoughtful manner. Figure 6 shows a sample unified information architecture.

FIGURE 6. Unified information architecture

Determine the Strategic Road Map

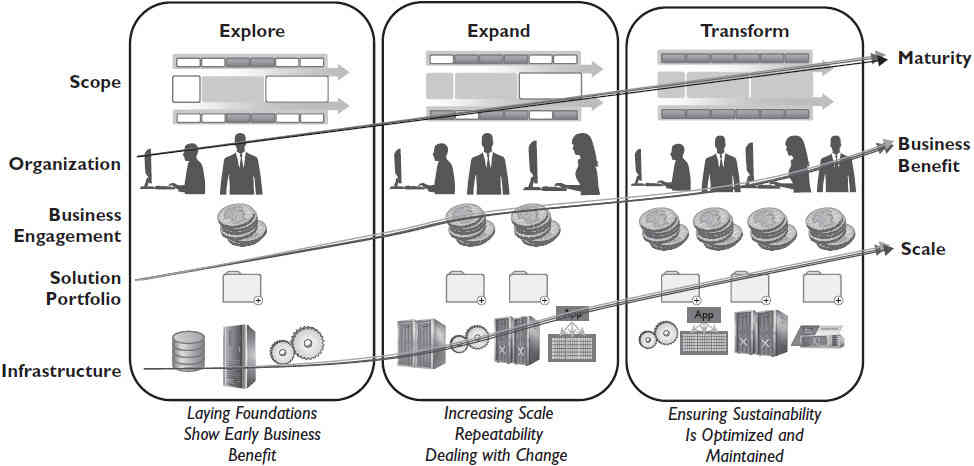

Companies vary in their level of analytical maturity, but most want to gain a competitive advantage using data-driven decision making. Enterprises are trying to improve productivity, increase revenue, reduce costs, and manage risk and uncertainty in decision making. The potential benefits warrant attention, and companies are making investments to improve their analytic strength. Figure 7 contains an overview of an incremental approach to improving business analytical capabilities over time.

FIGURE 7. An incremental approach to analytical maturity

The three stages are Explore, Expand, and Transform.

In the Explore stage, the most common activities include use case development and prioritization, a proof of concept with a few use cases, building a small Hadoop cluster or NoSQL database to supplement the existing data warehouse, and initiating data discovery capabilities to show early business benefit. The key is to take small steps while staying aware of the overall future-state architecture, focus on quick time to market, and allow for continued adoption of open-source architecture and capabilities.

The Expand phase is oriented toward increasing scale and repeatability. More data sources are introduced that require expansion of the hybrid data platform. Integration among these components (between RDBMS and HDFS, for example) is a critical capability to ensure success. Data discovery becomes enterprise-scale and mainstream. This is the phase when data and analytics as a service will mature.

The Transform phase focuses on innovative solutions powered by this agile data platform and analytical services established and matured through the first two stages. With this solid foundation, the enterprise is fully prepared to adopt cutting-edge solutions and embrace new analytical trends, which we will discuss in the “What’s Coming Next?” section.

Once a future-state architecture is determined, the key decisions are where to invest first, in terms of business area and components, and how to build up these capabilities over time.

The rule of thumb is to choose a business area that is ripe for success based on business priority, perceived value and return on investment, availability of the historic data sources required to produce meaningful insights, and adequate analyst skill levels.
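The rule of thumb can be turned into a simple weighted score to compare candidate business areas. The minimal sketch below scores each area on the four criteria named above. The weights, the 1-to-5 scores, and the area names are hypothetical and would be set with business stakeholders. The ranking is only as good as those inputs.

```python
# Hypothetical weights for the four criteria in the text (they sum to 1.0).
WEIGHTS = {"priority": 0.30, "value": 0.30, "data_availability": 0.25, "skills": 0.15}

def readiness(scores: dict[str, float]) -> float:
    """Weighted readiness score from per-criterion scores (1-5 scale assumed)."""
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)

def rank_areas(areas: dict[str, dict[str, float]]) -> list[str]:
    """Order candidate business areas from most to least ready."""
    return sorted(areas, key=lambda a: readiness(areas[a]), reverse=True)
```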

Implement Governance

As Boorstin pointed out, the biggest threat to knowledge is the illusion of knowledge. People tend to be more cautious when they know what they don’t know. With the rise of machine learning, data mining, and other advanced analytics, IDC predicts that “decision and automation solutions, utilizing a mix of cognitive computing, rules management, analytics, biometrics, rich media recognition software, and commercialized high-performance computing infrastructure will proliferate.”

So, data governance, in the context of big data, will need to focus on determining when automated decisions are appropriate and when human intervention and interpretation are required.

Controls to synthesize analytical models and to establish and measure analytical quality will also emerge to provide effective governance for business decision making.

Data quality continues to be relevant for analytical capabilities. But quality standards need to be based on the nature of consumption. For example, financial reporting requires clean and carefully modeled data, whereas raw data is usually appropriate for exploratory analytics.

In short, the focus and approach for data governance need to be relevant and adaptive to the data types in question and the nature of information consumption.

People and Skills

A “skills gap” is the largest barrier to success with new data and analytical platforms. According to TDWI, the majority of the organizations investing in business analytics intend to upgrade their analytical strengths by improving the skills of existing staff and hiring new analytical talent. Various sources, including the U.S. Bureau of Labor Statistics, U.S. Census, Dun & Bradstreet, and McKinsey Global Institute, indicate that by 2018 the demand for deep analytical talent in the United States could be 50 to 60 percent greater than the supply.

Success with business analytics requires more than just technology. Organizations must upgrade their business and technical analytical skills to make full use of the available technology and to apply the results of analytics to the appropriate business opportunities.

To accelerate an organization's analytical maturity, a center of excellence is beneficial. A center of excellence is a cross-functional team of analytic and domain specialists who plan and prioritize analytics initiatives, manage and support those initiatives, and promote broader use of information and analytics best practices throughout the enterprise. Although it will not have direct ownership of all aspects of the analytics framework, an analytics center of excellence will provide oversight, guidance, and coordination for technology, process, data stewardship, and the overall analytics program, from both an infrastructure and support perspective and a governance perspective. It can also produce significant value by identifying where IT, domain, and analytical resources exist and making the best use of them.

Because an analytics center of excellence mobilizes analytic resources for the good of the organization, not just for specific business units or one-off projects, it ultimately changes the culture of the organization to appreciate the value of analytics-driven decisions and continuous learning.