As defined in this article, a cluster is a group of interconnected nodes that acts like a single large server capable of growing and shrinking on demand. In other words, clustering can be viewed logically as a method for enabling multiple standalone servers to work together as a coordinated unit called a cluster. The servers participating in the cluster must be homogeneous: they must use the same platform architecture and operating system, with almost identical hardware architecture and software patch levels. They must also be independent machines that respond to requests from the same pool of client requests.

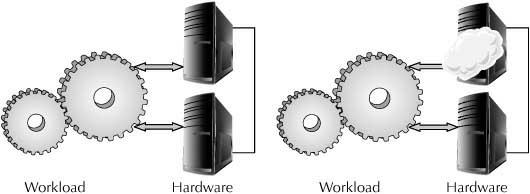

From another perspective, clustering can also be viewed as host virtualization in modern cloud computing architectures: end-user applications do not connect to “a server” specifically, but to a logical server that is internally a group of physical servers. The clustering layer wraps the underlying physical servers and exposes only the logical layer externally, thus offering the simplicity of a single node to the outside world.

Traditionally, clustering has been used to scale up systems, to speed up systems, and to survive failures.

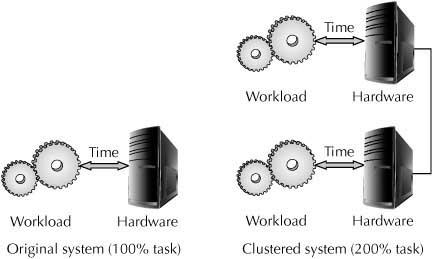

Scaling is achieved by adding extra nodes to the cluster group, thus enabling the cluster to handle progressively larger workloads. Clustering provides horizontal on-demand scalability without incurring any downtime for reconfiguration.
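The idea of growing a cluster by adding nodes can be sketched in a few lines of Python. This is a toy model, not how any particular clustering product works: the `Cluster` class, its round-robin routing, and the node names are all assumptions made for illustration.

```python
class Cluster:
    """Toy logical server that spreads requests over its member nodes.

    Hypothetical illustration only: real clusterware uses far more
    sophisticated membership and load-balancing logic.
    """

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self._next = 0  # running counter used for round-robin routing

    def add_node(self, name):
        # Scaling out: the new node joins while the cluster keeps serving.
        self.nodes.append(name)

    def route(self, request):
        # Clients only see the cluster; the chosen node is an internal detail.
        node = self.nodes[self._next % len(self.nodes)]
        self._next += 1
        return node


def requests_per_node(cluster, n_requests):
    """Send n_requests through the cluster and count how many each node got."""
    counts = {}
    for i in range(n_requests):
        node = cluster.route(i)
        counts[node] = counts.get(node, 0) + 1
    return counts


if __name__ == "__main__":
    cluster = Cluster(["node1", "node2"])
    print(requests_per_node(cluster, 6))
    cluster.add_node("node3")  # no restart of the toy cluster is needed
    print(requests_per_node(cluster, 6))
```

With two nodes, each handles half of the requests; after the third node is added, the same workload is spread three ways, so the load per node drops.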

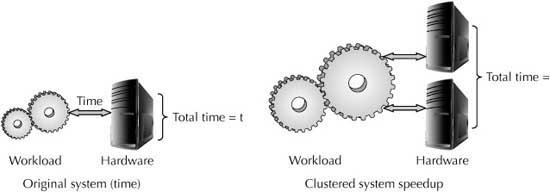

Speeding up is accomplished by splitting a large workload into multiple smaller workloads and running them in parallel on all the available CPUs. Parallel processing can greatly improve performance for jobs that can be run in parallel. The simple “divide and conquer” approach is applied to the large workloads, and parallel processing uses the power of all the resources to get work done faster.
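As a minimal sketch of the divide-and-conquer approach, the following Python example splits one large job (summing squares) into chunks, processes the chunks in parallel, and combines the partial results. The function names and the choice of workload are assumptions for illustration. It runs on the CPUs of a single machine through the standard `concurrent.futures` module, whereas a cluster would distribute the chunks across nodes.

```python
from concurrent.futures import ProcessPoolExecutor


def split(items, parts):
    """Divide items into `parts` contiguous chunks of near-equal size."""
    items = list(items)
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """The small workload that each worker runs independently."""
    return sum(n * n for n in chunk)


def parallel_sum_of_squares(numbers, workers=4, executor_cls=ProcessPoolExecutor):
    """Divide the job, run the pieces in parallel, then combine the results."""
    chunks = split(numbers, workers)
    with executor_cls(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    # The guard is needed on platforms that start worker processes by spawning.
    print(parallel_sum_of_squares(range(1_000_000), workers=4))
```

This only pays off when the chunks are independent and each is large enough to outweigh the cost of distributing it, which is what is meant by jobs that can be run in parallel.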

Because a cluster is a group of independent hardware nodes, the failure of one node does not stop the application from running on the other nodes. The application and its services are seamlessly transferred to the surviving nodes, and with proper design the application continues to function as it did before the node failure. In some cases, the application or the user process may not even be aware of such failures, because the failover to the other node is transparent to the application.
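The failover behavior described above can be sketched as follows. This is a simplified, hypothetical model: the `Node` class, the `NodeDown` exception, and the `submit` function are invented for illustration, and real clusterware also has to detect failures, relocate services, and recover in-flight work.

```python
class NodeDown(Exception):
    """Raised by a node that cannot service requests (hypothetical)."""


class Node:
    def __init__(self, name):
        self.name = name
        self.alive = True

    def handle(self, request):
        if not self.alive:
            raise NodeDown(self.name)
        return f"{self.name}:{request}"


def submit(nodes, request):
    """Try each node in turn; the caller never sees individual node failures."""
    for node in nodes:
        try:
            return node.handle(request)
        except NodeDown:
            continue  # fail over to the next surviving node
    raise RuntimeError("no surviving nodes in the cluster")


if __name__ == "__main__":
    node1, node2 = Node("node1"), Node("node2")
    print(submit([node1, node2], "query"))  # served by node1
    node1.alive = False                     # simulate a node failure
    print(submit([node1, node2], "query"))  # transparently served by node2
```

From the caller's point of view, `submit` behaves the same before and after the failure. Only when every node is down does the error surface.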

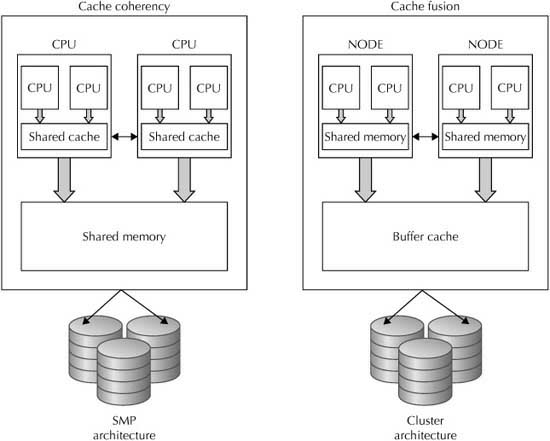

When a uniprocessor system reaches its processing limit, it poses a serious threat to scalability. Symmetric multiprocessing (SMP), the use of multiple processors (CPUs) that share memory (RAM) within a single computer to provide increased processing capability, solves this problem. SMP machines achieve high performance through parallelism, in which the processing job is split up and run on the available CPUs. Higher scalability is achieved by adding more CPUs and memory.

Figure 1 compares the basic architectural similarities between symmetric multiprocessing and clustering. However, the two architectures maintain cache coherency at totally different levels and latencies.

FIGURE 1 SMP vs. Clusters

NOTE

Uniprocessor systems are no longer in use. Also, newer technologies such as InfiniBand and Silicon Photonics from Intel provide faster network connectivity, and other technologies such as Oracle Data Connectors with Hadoop, Oracle Big Data Appliance, R, and Big Data SQL all support parallelism.