In this blog, we cover the components that make up a typical database infrastructure. This article is introductory; the posts that follow provide more detail about designing, implementing, and provisioning databases.

Although Microsoft SQL Server is new to Linux, Microsoft has, as much as possible, crafted it to work the same way that it does on Windows. We highlight places where there are differences.

No matter which configurations you end up using, there are four basic parts to a database infrastructure:

- Memory

- Processor

- Permanent storage

- Network

We also touch on a couple of high availability offerings, including improvements to availability groups in SQL Server 2017. We then look at an introduction to security concepts, including ways to access instances of SQL Server on-premises with Windows and Linux, and Microsoft Azure SQL Database. Finally, we take a brief look at virtualization.

Memory

SQL Server 2017 is designed to use as much memory as it needs, and as much as you give it. By default, the upper limit of memory that SQL Server can access is set by the physical random access memory (RAM) available to the server or by the edition of SQL Server you’re running, whichever is lower.
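The "whichever is lower" rule can be sketched in a few lines of Python. This is only an illustration: the function name `effective_memory_limit_gb` and the figures passed to it are hypothetical and are not taken from any SQL Server documentation or API.

```python
from typing import Optional


def effective_memory_limit_gb(physical_ram_gb: float,
                              edition_cap_gb: Optional[float]) -> float:
    """Return the lower of physical RAM and the edition's memory cap.

    edition_cap_gb is None when the edition imposes no cap of its own.
    """
    if edition_cap_gb is None:
        return physical_ram_gb
    return min(physical_ram_gb, edition_cap_gb)


# Hypothetical figures, chosen only to show both outcomes.
print(effective_memory_limit_gb(256, 128))   # the edition cap is lower
print(effective_memory_limit_gb(64, 128))    # physical RAM is lower
print(effective_memory_limit_gb(256, None))  # no edition cap applies
```

Check the documentation for your edition before relying on any particular cap.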

Understanding the working set

The physical memory that the operating system (OS) makes available to SQL Server is called the working set. The SQL Server memory manager divides this working set into several sections, the two largest and most important being the buffer pool and the procedure cache (also known as the plan cache).

In the strictest sense, “working set” applies only to physical memory. However, as we will see shortly, the buffer pool extension blurs the lines.

Caching data in the buffer pool

For best performance, you cache data in memory because it’s much faster to access data directly from memory than from storage.

The buffer pool is an in-memory cache of 8-KB data pages that are copies of pages in the database file. Initially the copy in the buffer pool is identical, but changes to data are applied to this buffer pool copy (and the transaction log) and then asynchronously applied to the data file.

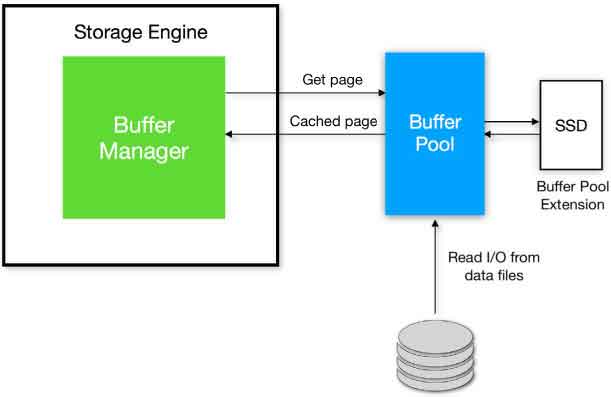

When you run a query, the Database Engine requests the data page it needs from the Buffer Manager, as depicted in Figure 1. If the data is not already in the buffer pool, a page fault occurs (an OS feature that informs the application that the page isn’t in memory). The Buffer Manager fetches the data from the storage subsystem and writes it to the buffer pool. When the data is in the buffer pool, the query continues.

Figure 1 The buffer pool and the buffer pool extension.
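The read and write paths described above can be illustrated with a toy model in Python. It is a sketch under loud assumptions: the class `ToyBufferPool` and its methods are invented for this example, a plain dictionary stands in for the data file, and eviction, locking, and the buffer pool extension are left out. It is not how the Database Engine is implemented.

```python
PAGE_SIZE = 8 * 1024  # 8-KB data pages, as described above


class ToyBufferPool:
    """Toy cache of pages in front of a 'data file' (a dict of page_id -> bytes)."""

    def __init__(self, data_file):
        self.data_file = data_file  # stands in for the storage subsystem
        self.pages = {}             # in-memory copies of data pages
        self.dirty = set()          # pages changed in memory, not yet written back
        self.log = []               # stands in for the transaction log
        self.storage_reads = 0      # how often we had to go to storage

    def get_page(self, page_id):
        # On a miss, fetch the page from storage and keep a copy in memory.
        if page_id not in self.pages:
            self.storage_reads += 1
            self.pages[page_id] = self.data_file[page_id]
        return self.pages[page_id]

    def write_page(self, page_id, new_bytes):
        # Changes go to the log and to the in-memory copy, not to the data file.
        self.get_page(page_id)
        self.log.append((page_id, new_bytes))
        self.pages[page_id] = new_bytes
        self.dirty.add(page_id)

    def flush(self):
        # Later (asynchronously in the real engine), dirty pages reach the data file.
        flushed = len(self.dirty)
        for page_id in self.dirty:
            self.data_file[page_id] = self.pages[page_id]
        self.dirty.clear()
        return flushed


data_file = {1: bytes(PAGE_SIZE), 2: bytes(PAGE_SIZE)}
pool = ToyBufferPool(data_file)
pool.get_page(1)
pool.get_page(1)                      # second read is served from memory
print(pool.storage_reads)             # 1
pool.write_page(1, b"\x01" * PAGE_SIZE)
print(data_file[1] == bytes(PAGE_SIZE))  # True: data file unchanged until flush
pool.flush()
print(data_file[1] == bytes(PAGE_SIZE))  # False: change has reached the data file
```

The point of the sketch is the ordering: a read touches storage only on a miss, and a change reaches the data file only after it is recorded in the log and in the in-memory copy.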

The buffer pool is usually the largest consumer of the working set because that’s where your data is. If the amount of data requested for a query exceeds the capacity of the buffer pool, the data pages will spill to a drive, either using the buffer pool extension or a portion of TempDB.

The buffer pool extension makes use of nonvolatile storage to extend the size of the buffer pool. It effectively increases the database working set, forming a bridge between the storage layer where the data files are located and the buffer pool in physical memory.

For performance reasons, this should be solid-state storage, directly attached to the server.

Caching plans in the procedure cache

Generally speaking, the procedure cache is smaller than the buffer pool. When you run a query, the Query Optimizer compiles a query plan to explain to the Database Engine exactly how to run the query. To save time, it keeps a copy of that query plan so that it doesn’t need to compile the plan each time the query runs. It is not quite as simple as this, of course (plans can be removed, and trivial plans are not cached, for instance), but it’s enough to give you a basic understanding.

The procedure cache is split into various cache stores by the memory manager, and it’s also here where you can see if there are single-use query plans that are polluting memory.
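To make the idea of plan reuse and single-use plans concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: `ToyPlanCache`, its methods, and the fake "compile" function are hypothetical, plans are keyed by exact query text, and the cache stores, plan eviction, and trivial-plan handling mentioned above are ignored.

```python
class ToyPlanCache:
    """Toy plan cache keyed by exact query text."""

    def __init__(self, compile_fn):
        self.compile_fn = compile_fn  # stands in for the Query Optimizer
        self.plans = {}               # query text -> compiled plan
        self.use_counts = {}          # query text -> number of times the plan was used
        self.compilations = 0

    def get_plan(self, query_text):
        # Compile only when no cached plan exists for this exact text.
        if query_text not in self.plans:
            self.compilations += 1
            self.plans[query_text] = self.compile_fn(query_text)
            self.use_counts[query_text] = 0
        self.use_counts[query_text] += 1
        return self.plans[query_text]

    def single_use_plans(self):
        # Plans that were compiled, used once, and never reused.
        return [q for q, n in self.use_counts.items() if n == 1]


cache = ToyPlanCache(lambda q: f"plan for: {q}")

# A parameterized query is compiled once and reused.
for _ in range(3):
    cache.get_plan("SELECT * FROM Orders WHERE Id = @id")

# Ad hoc queries with literal values each get their own plan.
cache.get_plan("SELECT * FROM Orders WHERE Id = 1")
cache.get_plan("SELECT * FROM Orders WHERE Id = 2")

print(cache.compilations)        # 3
print(cache.single_use_plans())  # the two ad hoc queries
```

In this toy model, the two ad hoc queries occupy cache entries that are never reused, which is the kind of pollution the paragraph above refers to.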

For more information about cached execution plans, visit the Microsoft blog.

Lock pages in memory

Turning on the Lock pages in memory (LPIM) policy means that Windows will not be able to trim (reduce) SQL Server’s working set.

Locking pages in memory ensures that Windows memory pressure cannot rob SQL Server of resources or shunt SQL Server memory into the Windows Server system page file, either of which would dramatically reduce performance. Windows doesn’t “steal” memory from SQL Server arbitrarily; it does so in response to memory pressure on the Windows Server. Indeed, all applications can have their memory affected by pressure from Windows.

On the other hand, with LPIM turned on, Windows cannot trim SQL Server’s working set to relieve pressure from other applications’ or a virtual host’s memory demands, so it might not be able to free enough memory to remain stable. Because of this concern, LPIM cannot be the only method used to protect SQL Server’s memory allocation.

The controversy is one of stability versus performance. The performance benefit was especially apparent on systems with limited memory resources and older operating systems. On larger servers running Windows Server 2008 or later, and especially on virtualized systems, there is a smaller but nonzero need for this policy to insulate SQL Server from memory pressure.

The prevailing wisdom is that the LPIM policy should be turned on by default for SQL Server 2017, provided the following:

- The server is physical, not virtual.

- Physical RAM exceeds 16 GB (the OS needs a working set of its own).

- Max Server Memory has been set appropriately (SQL Server can’t use everything it sees).

- The Memory\Available MBytes performance counter is monitored regularly (to keep some memory free).

If you would like to read more, Jonathan Kehayias explains this thinking in a Simple Talk article.
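The four conditions listed above can be written down as a simple checklist. The following Python sketch is illustrative only: `ServerFacts` and `lpim_blockers` are hypothetical names, and the values are supplied by hand rather than read from a real server.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ServerFacts:
    is_physical: bool                    # physical, not virtual
    physical_ram_gb: float               # installed RAM
    max_server_memory_configured: bool   # Max Server Memory set appropriately
    available_mbytes_monitored: bool     # Memory\Available MBytes watched regularly


def lpim_blockers(server: ServerFacts) -> List[str]:
    """Return the reasons, if any, that LPIM should not be turned on by default."""
    blockers = []
    if not server.is_physical:
        blockers.append("server is virtual")
    if server.physical_ram_gb <= 16:
        blockers.append("physical RAM does not exceed 16 GB")
    if not server.max_server_memory_configured:
        blockers.append("Max Server Memory has not been set appropriately")
    if not server.available_mbytes_monitored:
        blockers.append("Memory\\Available MBytes is not monitored")
    return blockers


# Hypothetical servers, for illustration only.
big_physical = ServerFacts(True, 128, True, True)
small_vm = ServerFacts(False, 8, True, False)

print(lpim_blockers(big_physical))  # []
print(lpim_blockers(small_vm))
```

An empty list means that all four conditions hold; anything else names the conditions that still need attention.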

Editions and memory limits

Since SQL Server 2016 Service Pack 1, many Enterprise edition features have found their way into the lower editions. Ostensibly, this was done to allow software developers to have far more code that works across all editions of the product.

Although some features are still limited by edition (high availability, for instance), features such as Columnstore and In-Memory OLTP are turned on in every edition, including Express. However, only Enterprise edition can use all available physical RAM for these features. Other editions are limited.

In-Memory OLTP considerations