In this blog we will cover the Endeca Integrator Acquisition System, or Endeca IAS, a tool that has two major functions. Its primary function is to crawl source data stored in a variety of formats, including file systems, delimited files, and web servers. (You can find a complete list of supported file formats in the appendix.) Its other function is to store this data in a data repository known as a record store and make this data available to Integrator ETL through a web service.

The goal of this blog is to introduce Endeca IAS and its capabilities and to give you enough information to decide what place Endeca IAS has in your organization’s enterprise data exploration. Endeca IAS is operated entirely from the command line; it has no graphical user interface. Before selecting this tool, make sure that the people who will use it are comfortable working at a command line. Database administrators (DBAs) and systems administrators typically have these skills, as do developers who work in UNIX or Linux. Endeca IAS is configured through XML and text files located on the server hosting Endeca IAS. These files can be edited on the server with the vi editor, or edited on a workstation and then transferred to the server.

Endeca IAS Crawls

Crawl is the name given to the data collection activity of Endeca IAS, and it applies to data collected from all sources. There are two basic types of crawls. The first type consists of crawls of delimited files, file systems, and JDBC data sources, which we will refer to as local crawls. The second type consists of crawls of entities that are accessed by HTTP, which are primarily web content but can consist of other types of content stored in files. There are two sets of documentation provided by Oracle on crawls. “Integrator Acquisition System Developers Guide” covers local crawls, and “Integrator Acquisition System Web Crawler Guide” covers web crawls.

Local crawls are managed by the command-line utility ias-cmd, which provides the ability to create, run, and delete crawls, as well as perform nearly every type of management task related to crawls. Local crawls must be configured to address considerations specific to the file type to be crawled. For example, for a crawl of the archive file types JAR and ZIP, options must be selected to expand the archives. Note that Endeca IAS can only crawl file systems that are mounted to the Endeca IAS server, meaning only file systems on storage local to the server or file systems on shared network drives.

Web crawls are run with a dedicated shell script, web-crawler.sh. A web crawl can follow links progressively deeper into a web site; how deep it goes is governed by the numeric -d parameter of web-crawler.sh. Web crawls are configured to be either polite or nonpolite. Because a web crawler can retrieve data much faster than a person using a web browser, it can have a crippling impact on the performance of a site. To avoid this, a polite web crawl waits 1 second between requests to the same domain. A nonpolite web crawl has no delay.
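To make the polite versus nonpolite distinction concrete, here is a minimal Python sketch of a per-domain delay. It is not how Endeca IAS implements politeness internally; the class name `PoliteScheduler` and its methods are hypothetical and only illustrate the idea of spacing requests to one domain at least 1 second apart.

```python
import time
from urllib.parse import urlparse


class PoliteScheduler:
    """Illustrative only: computes how long to wait so that requests
    to the same domain are at least `delay` seconds apart."""

    def __init__(self, delay=1.0, clock=time.monotonic):
        self.delay = delay        # 1.0 mimics a polite crawl, 0.0 a nonpolite crawl
        self.clock = clock        # injectable clock so the logic can be checked without sleeping
        self.next_allowed = {}    # domain -> earliest time the next request may start

    def wait_time(self, url):
        """Return the seconds to wait before requesting `url` and reserve that slot."""
        domain = urlparse(url).netloc
        now = self.clock()
        start = max(now, self.next_allowed.get(domain, now))
        self.next_allowed[domain] = start + self.delay
        return start - now
```

A caller would run `time.sleep(scheduler.wait_time(url))` before each fetch. Requests to different domains do not delay each other, and a delay of 0 reproduces the nonpolite behavior.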

Both local crawls and web crawls can be either full or incremental. A full crawl captures all content specified, and an incremental crawl captures only content that has changed since a previous crawl.
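The difference between a full and an incremental crawl can be sketched in a few lines of Python. How Endeca IAS actually detects changes is not described here, so the content-hash comparison below is an assumption chosen for illustration, and the function names are hypothetical.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Hash the content so two crawls can be compared cheaply."""
    return hashlib.sha256(content).hexdigest()


def incremental_changes(previous, current):
    """Compare the current crawl against the state saved by the previous one.

    previous -- {item_id: fingerprint} saved after the last crawl ({} for a full crawl)
    current  -- {item_id: raw bytes} seen in this crawl
    Returns (changed_or_new_ids, deleted_ids, new_state).
    """
    new_state = {item: fingerprint(data) for item, data in current.items()}
    changed = sorted(i for i, fp in new_state.items() if previous.get(i) != fp)
    deleted = sorted(i for i in previous if i not in new_state)
    return changed, deleted, new_state
```

With an empty `previous` state, every item is reported as changed, which corresponds to a full crawl. On later runs only new or modified items are reported, which is the incremental case.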

The data from a crawl can be output to XML files or to record stores. Output to XML files is useful for debugging and provides some capabilities for interfacing to anything that can consume XML.

Endeca IAS Record Store

Data collected by Endeca IAS is stored in a record store. A record store is a staging database that resides on the same server as Endeca IAS and makes the content acquired through crawls available to Endeca ETL through web services. Record store maintenance and management are covered extensively in “Integrator Acquisition System Developers Guide.” The record store management tools are a limited set of command-line utilities for monitoring and maintenance; they do not provide any direct access to the stored data. Data can be extracted from record stores into XML format with the recordstore read-baseline command.

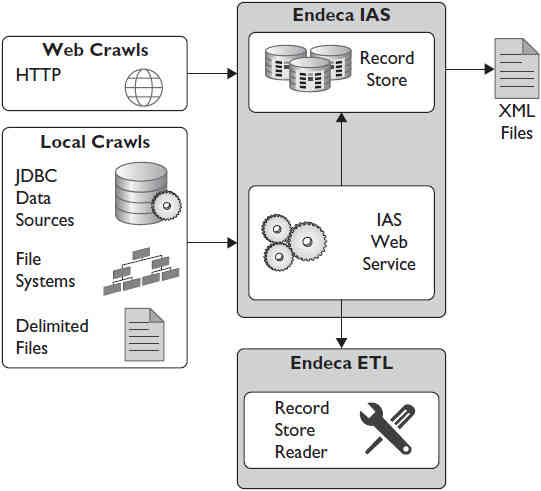

Figure 1 shows the major components and features of Endeca IAS. Local crawls, regardless of data type acquired, all use the same command-line tool, whereas web crawls have a command-line tool just for web crawls.

FIGURE 1. Endeca IAS taxonomy

Example Endeca IAS Crawl

Because of the volume of data on the Internet, we will cover how to collect web data through web crawls instead of demonstrating a local crawl. Local crawls do not differ greatly from web crawls, so the steps to run a web crawl are nearly the same as those for a local crawl.

In this blog we covered public data on bank failures from the web site for the Federal Deposit Insurance Corporation (FDIC). For an Endeca IAS example, we will show how to crawl the FDIC press releases for 1994–2013. The configuration for a crawl requires a seed, which is a list of URLs to be crawled. The example seed consists of a list of the URLs for FDIC press releases. The URLs follow a simple pattern, and the seed file is stored in a file called FDICALL.lst.

The file starts with these lines:

The file ends with these last two lines:

![]()
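Because the seed URLs follow a simple pattern, a seed file like this can be generated rather than typed by hand. The Python sketch below assumes one URL per year and one URL per line in the file; the URL template uses the placeholder domain www.example.com and is not the real FDIC address, and both function names are hypothetical.

```python
def build_seed_urls(template, first_year, last_year):
    """Expand a URL template containing {year} into one URL per year (inclusive)."""
    return [template.format(year=year) for year in range(first_year, last_year + 1)]


def write_seed_file(path, urls):
    """Write the seed list as plain text, one URL per line (assumed seed format)."""
    with open(path, "w", encoding="utf-8") as seed_file:
        seed_file.write("\n".join(urls) + "\n")


# Placeholder template: substitute the real press-release URL pattern here.
EXAMPLE_TEMPLATE = "https://www.example.com/press/{year}/index.html"
```

Calling `write_seed_file("FDICALL.lst", build_seed_urls(EXAMPLE_TEMPLATE, 1994, 2013))` would produce a 20-line file covering 1994 through 2013.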

Let’s examine the commands needed to execute the web crawl. These are all entered on the Linux command line, and we are showing these commands to emphasize the relative ease of performing a web crawl. You must create a record store to hold the output of the web crawl. Call this record store FDICALL.

![]()

The file site.xml stores settings for the crawl, and this file must be modified to reflect the name of the record store. No additional modifications to site.xml or any other file are required to run a web crawl. The actual command to run the crawl is as follows:

![]()

This simple command runs the web crawl at a depth of 1. As the web crawl runs, status messages are returned. Because you are writing to a record store, you never actually see the data collected. For debugging purposes, you could instead have sent the collected data to an XML file for review. In this example, you will not be able to examine the data until you bring it into Endeca ETL.

The crawl depth, as set by the -d flag, sets how many levels of page links will be followed. A URL in the seed has a level of 0, and each link from a seed URL has a level of 1. Links from a level 1 URL have a level of 2, and so on. Setting the depth to a higher number like 4 or more increases the scope and duration of a web crawl, as well as the amount of data acquired by the crawl.
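The level numbering can be illustrated with a small breadth-first walk over a toy link graph. This Python sketch is not the crawler's implementation: the `links` dictionary stands in for fetching pages, the function name is hypothetical, and it assumes that a depth of N means pages up to and including level N are collected.

```python
from collections import deque


def crawl_levels(seeds, links, max_depth):
    """Return {url: level} for every URL reached within max_depth.

    seeds     -- URLs from the seed list (level 0)
    links     -- dict mapping a URL to the URLs it links to (toy stand-in for fetching)
    max_depth -- plays the role of the -d value
    """
    levels = {url: 0 for url in seeds}
    queue = deque(seeds)
    while queue:
        url = queue.popleft()
        if levels[url] >= max_depth:
            continue  # at the depth limit: keep the page but do not follow its links
        for target in links.get(url, []):
            if target not in levels:  # record each URL once, at its shallowest level
                levels[target] = levels[url] + 1
                queue.append(target)
    return levels
```

For a graph where the seed links to pages a and b, a links to c, and c links to d, a depth of 1 reaches the seed, a, and b; a depth of 2 also reaches c. Each extra level can multiply the number of pages visited, which is why higher depths lengthen a crawl so quickly.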

When the crawl completes, the status messages show the successful completion of the crawl:

The data from the crawl is now in the record store FDICALL. You cannot view it until you get into Endeca ETL. The level of effort required to conduct this crawl was minimal, and after a short time learning about Endeca IAS, most IT organizations could quickly put it into service.

Endeca IAS Installation and Operations

Now we will provide insight regarding the installation of Endeca IAS not covered in Oracle’s official installation documentation. Oracle provides an installation guide specifically for Endeca IAS called “Oracle Endeca Information Discovery Integrator: Integrator Acquisition System Installation Guide.” It covers in depth the installation of Endeca IAS. Red Hat and Oracle Linux are supported, as is Microsoft Windows 2008 Server. Only Intel 64-bit x86 processors are supported. Two application servers are supported for Endeca IAS: Oracle WebLogic and Jetty Web Server. We recommend Oracle Enterprise Linux 6 for the operating system and Jetty for the application server because of the overall better experience of running Endeca IAS on Jetty. However, we will provide insight on both the WebLogic and Jetty installations.

Oracle WebLogic 11g, version 10.3.6, is required for Endeca IAS. Oracle WebLogic is used for many other components of Oracle Endeca, but be aware that the installation of WebLogic for Endeca IAS differs from the installation of WebLogic for other Endeca components. During the installation of WebLogic, you are prompted to select Products and Components. For all other Endeca components, the selection consists of Core Application Server, Administration Console, and Configuration Wizard and Framework. Endeca IAS requires one additional component, the Evaluation Database, as shown in Figure 2. Because of this additional requirement, a test environment in which a single WebLogic installation supports both Endeca Studio and Endeca Server cannot also be used for Endeca IAS.

FIGURE 2. WebLogic installation options for Endeca IAS

Jetty is an open-source, Java-based HTTP or web server and Java servlet container, and is usually used for machine-to-machine interactions instead of serving HTML pages to humans. Jetty has many high-profile deployments and is currently used with Apache Hadoop for some operations. Jetty requires Java, and the version of Java installed on your server will determine the version of Jetty that can be used. Java 6 is used for many of the other Endeca components covered in this blog, and if you stick with this version of Java, then you must use Jetty version 8. The Eclipse Foundation, the same organization associated with the development environment used for Integrator ETL, maintains Jetty, and Jetty is available for download from its web site. Installing Jetty involves unzipping a ZIP file in a directory of your choice. The directory where Jetty is installed is referred to as JETTY_HOME. To start Jetty, navigate to JETTY_HOME, and type the following:

![]()

You can check the status of Jetty from a web browser on the server where Jetty is running by accessing the status page on port 8080. This is the default page used for Jetty, as shown in Figure 3.

FIGURE 3. Jetty application status page

Once you have either WebLogic or Jetty installed, you can install Endeca IAS. Endeca IAS is installed with a single Linux shell script that prompts for the application server, ports, and other details. This single script is unusual because it contains the encoded data used for every part of the Endeca IAS installation.

When the installation script completes, the service used for Endeca IAS can be started. For Jetty, this is started from the command line with the following command:

![]()

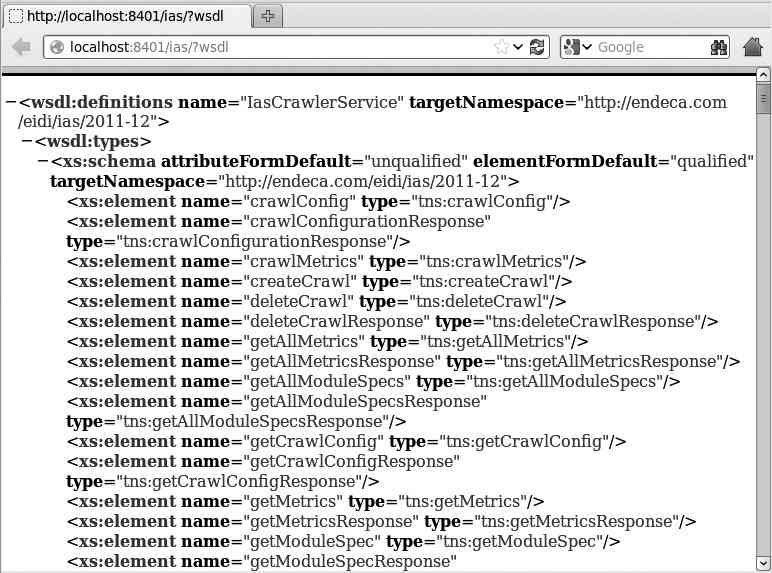

For Endeca 3.1, <version> in this line is 3.1.0. You can check the status of the service from a web browser on the server where Endeca IAS is running by accessing the status page on the port that you specified during the installation. With the default value for this port of 8401, the URL is http://localhost:8401/ias?wsdl. Figure 4 shows this in a browser.

FIGURE 4. Endeca IAS status page

This URL must be reachable from outside the Endeca IAS server so that Integrator ETL can access the web service. Testing it with a web browser or with the Linux wget command is a good health check for Endeca IAS. If the URL is not accessible, make sure that no operating system firewall is blocking the port used by the web service, in this case port 8401.
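The same health check can be scripted so that it runs from a monitoring job. The Python sketch below uses only the standard library; the function name `wsdl_reachable` is hypothetical, and it treats any HTTP 200 response as healthy without validating the WSDL content.

```python
import http.client
import urllib.error
import urllib.request


def wsdl_reachable(url, timeout=5.0):
    """Return True if `url` answers with HTTP 200 within `timeout` seconds."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # Connection refused, timeout, HTTP error status, or a malformed URL
        return False
```

Run from the machine hosting Integrator ETL, `wsdl_reachable("http://<ias-host>:8401/ias?wsdl")` returning False suggests a stopped service or a blocked port, where `<ias-host>` is the name of your Endeca IAS server.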

Operations of the Endeca IAS server are rather simple, with only two services to manage. Any time the server is rebooted or restarted for any reason, the Jetty HTTP server must be restarted, followed by the Endeca IAS service. These can be easily scripted with Linux shell scripts.

Endeca IAS has no scheduling capability. Since the commands to Endeca IAS are all Linux command line–based, these commands can be put into shell scripts and run by any enterprise scheduler. Endeca ETL Server has the capability to schedule shell scripts, and this would provide an integrated scheduling capability. Endeca IAS crawls could be scheduled to run first, followed by Endeca ETL graphs.
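If no enterprise scheduler is available, the "crawl first, then graph" ordering can be enforced by a small wrapper that a scheduler or cron job calls. This is a sketch under stated assumptions: the function name is hypothetical, and the commands you pass in are your own scripts, since the exact Endeca command lines are not reproduced here.

```python
import subprocess
import sys


def run_in_order(steps):
    """Run each (name, argv) step in sequence and stop at the first failure.

    Returns the names of the steps that completed successfully.
    """
    completed = []
    for name, argv in steps:
        result = subprocess.run(argv)
        if result.returncode != 0:
            print(f"step '{name}' failed with exit code {result.returncode}",
                  file=sys.stderr)
            break  # do not run later steps against stale or partial data
        completed.append(name)
    return completed
```

A call such as `run_in_order([("crawl", ["/path/to/run_crawl.sh"]), ("graph", ["/path/to/run_graph.sh"])])`, with both paths being placeholders for your own scripts, runs the graph only when the crawl exits cleanly. The same pattern fits the restart order described earlier, with Jetty as the first step and the Endeca IAS service as the second.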