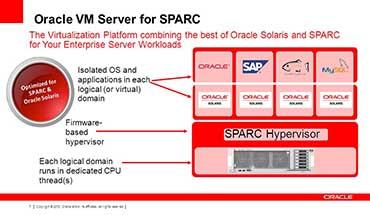

Oracle VM Server for SPARC (formerly called Logical Domains) is a virtualization technology that creates SPARC virtual machines, also called domains. It permits operation of virtual machines with less overhead than traditional designs by changing the way guests access CPU, memory, and I/O resources. It is an ideal way to consolidate multiple complete Oracle Solaris systems onto a modern powerful SPARC server.

Oracle VM Server for SPARC is available on systems based on SPARC chip multithreading technology (CMT) processors. These systems include the Oracle SPARC M5-32, M6-32, M7, S7, T4, T5, and T7 servers and blades; older SPARC T3 servers; Sun SPARC Enterprise T5x20/T5x40 servers; Sun Blade T6320/T6340 server modules; and Fujitsu SPARC M10 servers. The chip technology is integral to Oracle VM Server for SPARC, which leverages the large number of CPU threads available on these servers. At this writing, as many as 4096 CPU threads can be supported in a single M7-16 server. Oracle VM Server for SPARC is available on supported SPARC processors without additional license or hardware costs.

Oracle VM Server for SPARC Features

Oracle VM Server for SPARC creates virtual machines, which are usually called domains. Every domain runs its own instance of Oracle Solaris 11 or Solaris 10, just as if each had its own separate physical server. Each domain also has the following resources:

- CPUs

- Memory

- Network devices

- Disks

- Console

- OpenBoot environment

- Virtual host bus adapters (starting with Oracle VM Server for SPARC 3.3)

- Cryptographic accelerators (optionally specified on older, pre-T4 servers)

Different Solaris update levels run at the same time on the same server without conflict. Each domain is independently defined, started, and stopped.

Domains are isolated from one another. Consequently, a failure in one domain—even a kernel panic or CPU thread failure—has no effect on other domains, just as would be the case for Solaris running on multiple servers.

Oracle Solaris and applications in a domain are highly compatible with Solaris running on a physical server. Solaris has long had a binary compatibility guarantee; this guarantee has been extended to domains, such that no distinction is made between running as a guest or on bare metal. Thus, Solaris functions essentially the same way in a domain as it does on a non-virtualized system.

CPUs in Oracle VM Server for SPARC

One unique feature of Oracle VM Server for SPARC is how it assigns CPU resources to domains. This architectural innovation is discussed in detail here.

Traditional hypervisors use software to time-slice physical CPUs among virtual machines. These hypervisors must intercept and emulate privileged instructions issued by guest VM operating systems that would change the shared physical machine’s state (such as interrupt masks or memory mapping), thereby violating the integrity of separation between guests. This complex and expensive process may be needed thousands of times per second as guest VMs issue instructions that would be “free” (executed in silicon) on a physical server, but are expensive (emulated in software) in a VM. Context switches between virtual machines can require hundreds or even thousands of clock cycles. Each context switch to a different virtual machine requires purging the cache and translation lookaside buffer (TLB) contents, because identical virtual memory addresses map to different physical locations. This process increases memory latency until caches become filled with fresh content, only to be discarded at the next time slice. Although these effects have been reduced on modern processors, they still carry significant overhead.

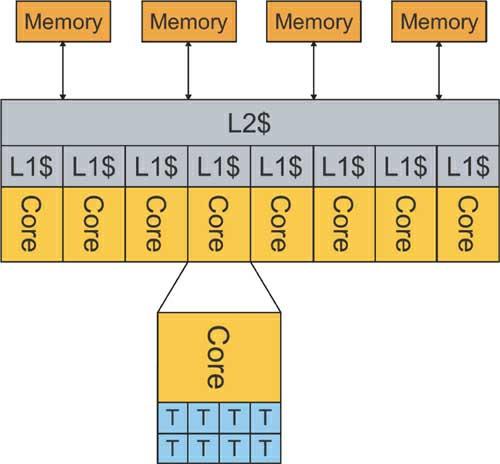

In contrast, Oracle VM Server for SPARC leverages SPARC chip multithreading processors to improve the system’s simplicity and reduce overhead. Modern SPARC processors provide many CPU threads (also called strands) on a single chip. SPARC T4 and UltraSPARC T2 and T2 Plus processors provide 8 cores with 8 threads per core, for a total of 64 threads per chip. SPARC T5 and T3 processors provide 16 cores with 8 threads per core, for a total of 128 threads per chip; the T5-8 has 8 sockets and a total of 1024 CPU threads. The M5 and M6 processors used in the M5-32 and M6-32 servers provide 6 and 12 cores per chip, respectively, with 8 threads per core, and the M6-32 scales to 3072 threads per system. M7 and T7 servers are based on the M7 processor, which has 32 cores and 8 threads per core, scaling up to 1024 threads on the T7-4 and 4096 threads on the M7-16. From the perspective of Oracle Solaris, each thread is considered to be a CPU.
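The per-system thread counts quoted above follow from multiplying cores per chip, threads per core, and sockets. The short Python sketch below reproduces that arithmetic. The core counts and the T5-8 socket count come from the text; the socket counts for the M6-32, T7-4, and M7-16 are assumed from the model names.

```python
THREADS_PER_CORE = 8  # every processor discussed here has 8 threads per core

# server -> (cores per chip, sockets)
# Socket counts other than the T5-8's are assumed from the model names.
SERVERS = {
    "T5-8": (16, 8),
    "M6-32": (12, 32),
    "T7-4": (32, 4),
    "M7-16": (32, 16),
}


def threads_per_system(cores_per_chip, sockets, threads_per_core=THREADS_PER_CORE):
    """Total CPU threads (what Solaris sees as CPUs) in a fully populated server."""
    return cores_per_chip * threads_per_core * sockets


totals = {name: threads_per_system(cores, sockets)
          for name, (cores, sockets) in SERVERS.items()}

for name, total in totals.items():
    print(f"{name}: {total} CPU threads")
```

The results match the totals given in the paragraph: 1024 for the T5-8 and T7-4, 3072 for the M6-32, and 4096 for the M7-16.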

These systems are rich in CPU threads, which are allocated to domains for their exclusive use rather than being doled out in a time-sliced manner, so domains run at native performance. The frequent context switches needed in traditional hypervisors are eliminated because each domain has dedicated hardware circuitry, and each can change its state (for example, by enabling or disabling interrupts) without causing a trap and emulation. Assigning CPUs to domains can save thousands of context switches per second, especially for workloads with high I/O activity. Context switching still occurs within a domain when Solaris dispatches different processes onto a CPU, but this behavior is identical to the way Solaris runs on a non-virtualized server. In other words, no overhead is added for virtualization.

SPARC systems also enhance use of the processor cache. All modern CPUs use fast on-chip or on-board cache memory that can be accessed in just a few clock cycles. If an instruction refers to data that is present in RAM but is not in the specific CPU’s cache, a cache miss occurs and the instruction is said to stall. In this scenario, the CPU must wait dozens or hundreds of clock cycles until the data is fetched from RAM so that the instruction can continue.

SPARC processors avoid this idle waiting time by switching execution to another CPU strand on the same core. This hardware context switch happens in a single clock cycle because each hardware strand has its own private hardware context. In this way, SPARC processors use cache miss time—time that is wasted (stall) on other processors—to continue doing useful work. This behavior reduces the effect of cache misses whether or not domains are in use, but Oracle VM Server for SPARC attempts to reduce cache misses by allocating CPU threads so that domains do not share per-core level 1 (L1) caches. This default behavior, which represents a best practice, can be ensured by explicitly allocating CPUs in units of whole cores. Actual performance benefits will depend on the system’s workload, and may be of minor consideration when CPU utilization is low.

Additionally, on T4 and later servers, Oracle VM Server for SPARC supports the Solaris “critical thread API,” in which a software thread is identified as being critical to an application’s performance and its performance is optimized. These software threads—such as the ones used for Java garbage collection or an Oracle Database log writer—are given all the cache and register resources of a core, and can execute instructions “out of order” so cache misses do not stall the pipeline. Consequently, critical application components may run dramatically faster. Oracle VM Server for SPARC flexibly provides better throughput performance for multiple threads while providing optimal performance for selected application components. Oracle software products increasingly make use of this powerful optimization.

Features and Implementation

Oracle VM Server for SPARC uses a very small hypervisor that resides in firmware, working with a Logical Domain Manager in the control domain (discussed later in this chapter) to assign CPUs, RAM locations, and I/O devices to each domain. Logical domain channels (LDCs) are used for communication both among domains and between domains and the hypervisor.

The hypervisor is kept as small as possible for simplicity and robustness. Many tasks traditionally performed within a hypervisor kernel, such as providing a management interface and performing I/O for guests, are offloaded to domains, as described in the next section.

This architecture has several benefits. Notably, a small hypervisor is easier to develop, manage, and deliver as part of a firmware solution embedded in the platform, and its tight focus improves security and reliability. This design adds resiliency by shifting functions from a monolithic hypervisor, which might potentially represent a single point of failure, to one or more privileged domains that can be deployed in parallel. As a result, Oracle VM Server for SPARC has resiliency options that are not available in older, traditional hypervisors. This design also makes it possible to leverage capabilities already available in Oracle Solaris, thereby providing access to existing infrastructure such as device drivers, file systems, features for management, reliability, performance, scale, diagnostics, development tools, and a large API set. It serves as an extremely effective alternative to developing all these features from scratch. Other modern hypervisors, such as the Xen hypervisor used in Oracle VM Server for x86, also use this architecture.

Domain Roles

Domains have different roles, and may be used for system infrastructure or applications:

- The control domain is an administrative control point that runs Oracle Solaris and the Logical Domain Manager services. It has a privileged interface to the hypervisor, and can create, configure, start, stop, and destroy other domains.

- Service domains provide virtual disk and network devices for guest domains. They are generally root I/O domains so as to have physical devices available as virtual devices. It is a best practice to use redundant service domains to provide resiliency against loss of an I/O path or service domain.

- I/O domains have direct connections to physical I/O devices and use them without any intervening virtualization layer. They are often service domains that provide access to these devices, but may also use physical devices for their own applications. An I/O domain that owns a complete PCIe bus is said to be a root domain. The control domain is a root domain and is typically also a service domain.

- Guest domains exclusively use virtual devices provided by service domains. Applications are typically run in guest domains.

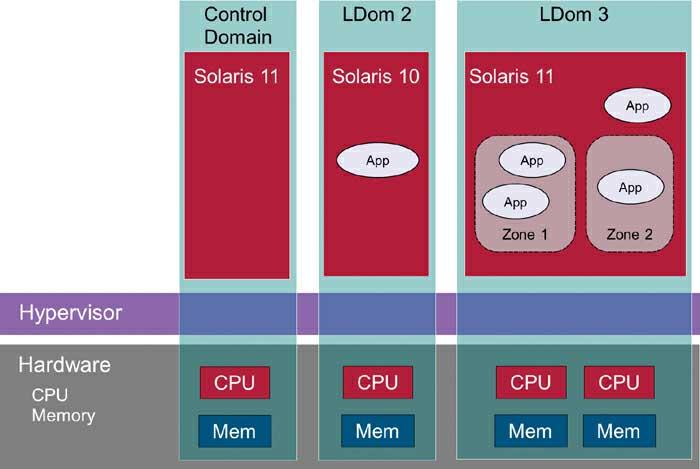

The virtinfo command displays the domain type. Specifically, for control domains, it displays the output Domain role: LDoms control I/O service root. For guest domains, it displays Domain role: LDoms guest. The domain structure and assignment of CPUs are shown in Figure 4.1.

Figure 4.1 Control and Guest Domains

The definition of a domain includes its name, the amount of memory and number of CPUs, its I/O devices, and policy settings. Domain definitions are constructed by using the command-line interface in the control domain, or by using the graphical interfaces provided by Oracle VM Manager and Oracle Enterprise Manager Ops Center.

Each server, or each physical domain on M-series servers, has exactly one control domain: the instance of Solaris that was first installed on the system. It runs Oracle VM Server for SPARC’s Logical Domain Manager services, which are accessed via a command-line interface provided by the ldm command or Oracle VM Manager, and may run agents that connect to Oracle VM Manager or Ops Center. The Logical Domain Manager includes a “constraint manager” that decides how to assign physical resources to satisfy the requirements (“constraints”) specified for each domain.

I/O domains have direct access to physical I/O devices, which may be Single-Root I/O Virtualization (SR-IOV) virtual functions (described later in this chapter), individual PCIe cards, or entire PCIe bus root complexes (in which case, the domain is called a “root domain”). The control domain is always an I/O domain, because it requires access to I/O buses and devices to boot up. There can be as many I/O domains as there are assignable physical devices. Applications that require native I/O performance can run in an I/O domain to avoid virtual I/O overhead. Alternatively, an I/O domain can be a service domain, in which case it runs virtual disk and virtual network services that provide virtual I/O devices to guest domains.

Guest domains rely on service domains for virtual disk and network devices and are the setting where applications typically run. At the time of this book’s writing, the maximum number of domains on a SPARC server or SPARC M-series physical domain (PDom) was 128, including control and service domains. As a general rule, it is recommended to run applications only in guest and I/O domains, and not in control and service domains.

A simple configuration consists of a single control domain that also acts as a service domain, and some number of guest domains. A configuration designed for availability would use redundant service domains to provide resiliency in case of a domain failure or reboot, or in case of the loss of a path to an I/O device.

Dynamic Reconfiguration

CPUs, RAM, and I/O devices can be dynamically added to or removed from a domain without requiring a reboot. Oracle Solaris running in a guest domain can immediately make use of a dynamically added CPU and memory as additional capacity; it can also handle the removal of all but one of its CPUs. Virtual disk and network resources can be added to or removed from a running domain, and a guest domain can make use of a newly added device without a reboot.

Virtual I/O

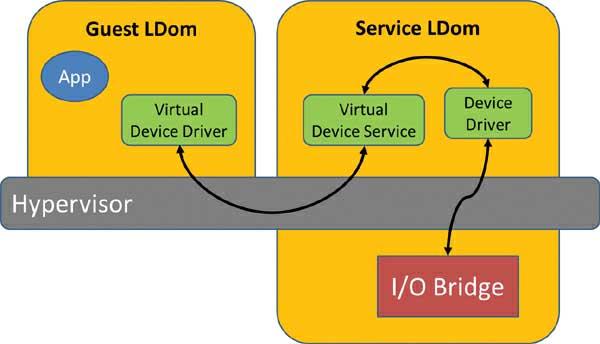

Oracle VM Server for SPARC abstracts underlying I/O resources to virtual I/O. It is not always possible or desirable to give each domain direct and exclusive use of an I/O bus or device, as this arrangement could increase the costs of both devices and cabling for a system. As an alternative, a virtual I/O (VIO) infrastructure provides shared access and greater flexibility.

Virtual network and disk I/O is provided by service domains, which proxy virtual I/O requests from guest domains to physical devices. Service domains run Solaris and own PCIe buses connected to the physical network and disk devices; thus they are also root domains. The control domain is typically a service domain as well, as it must have I/O abilities and there is no reason not to use that functionality.

The system administrator uses the ldm add-vswitch and ldm add-vds commands to define virtual network switches and virtual disk services, respectively. These services are then used to provide virtual network and disk devices to guest domains, and forward guest I/O operations to physical I/O devices used as “back-ends.” In a guest domain, Solaris uses virtual network and virtual disk device drivers that perform I/O by sending I/O requests to service domains. The service domain then processes the I/O operation and sends back the necessary status information when the I/O operation is complete.

The addition of device drivers that communicate with service domains instead of performing physical I/O is one of the ways in which Solaris has been modified to run in a logical domain. Communication is done via logical domain channels provided by the hypervisor. These LDCs provide communications channels between guests, and an API for sending messages that contain service requests and responses. The number of virtual devices in a domain is limited to the number of LDC endpoints that domain can have. SPARC T4, T5, T7, M5, M6, and M7 systems can have up to 1984 LDC endpoints per domain. Older T2 systems are limited to 512 LDC endpoints per domain, while T2+ and T3 systems can have up to 768 LDC endpoints for each domain.

Virtual I/O requires some degree of overhead, but it now approaches native I/O performance for appropriately configured service domains and back-ends. In practice, service domains with sufficient CPU and memory can drive guest I/O loads, and virtual disks can be based on physical disk volumes (LUNs) or slices. Figure 4.2 shows the relationship between guest and service domains and the path of I/O requests and responses.

Figure 4.2 Service Domains Provide Virtual I/O

Physical I/O

Virtual I/O is the most flexible choice for most domains, and its performance is very close to “bare metal” performance. Physical I/O, however, may be used for applications that need the highest I/O performance, or for device types such as tape that are not supported by virtual I/O.

As mentioned previously, a service domain is almost always an I/O domain (or it would not have devices to serve out), but an I/O domain need not be a service domain: It might use its own I/O devices to achieve native I/O performance unmediated by any service layer. As an example, such a deployment pattern is used with Oracle SuperCluster, which is optimized for high performance.

The physical I/O devices assigned to a domain are one of the following types:

- PCIe root complex. The domain is assigned an entire PCIe bus, and natively accesses all the devices attached to it. The domain is called a “root domain.”

- Single Root I/O Virtualization (SR-IOV) “virtual function” (VF) device. SR-IOV cards can present as multiple virtual functions, which can be individually assigned to a domain. An SR-IOV–capable card can have 8, 16, 32, or 64 separate virtual function devices, depending on the specific card. SPARC servers have built-in Ethernet SR-IOV devices, and Oracle VM Server for SPARC supports SR-IOV for Ethernet, InfiniBand, and Fibre Channel. Qualified cards are listed in the Oracle VM Server for SPARC Release Notes.

- Direct I/O (DIO). This type is similar to SR-IOV, except that a device in a PCIe slot is presented directly to the domain—one card results in one device for one domain. SR-IOV is preferred to direct I/O, since it provides additional flexibility. Direct I/O is not supported on T7 and M7 servers. As with SR-IOV, qualified cards are listed in the Oracle VM Server for SPARC Release Notes.

To create an I/O domain, you first identify the physical I/O device to give to the domain, which depends on the server model and the resources to be assigned, and then issue the ldm add-io command to assign the device. Depending on the server and previous device assignments, it may be necessary to first issue an ldm rm-io command to remove the device from the control domain, which initially owns all system resources.

Physical devices are used by Solaris exactly as they would be in a non-virtualized environment. That is, they have the same device drivers, device names, behavior, and performance as if Solaris were not running in a domain at all.

There are some limitations to use of physical I/O. Notably, a server has only a given number of devices that can be assigned to domains. That number is smaller than the number of virtual I/O devices that can potentially be defined, since virtual I/O makes it possible to share physical network and disk devices. Also, I/O domains cannot be live-migrated from one server to another (see the discussion of live migration later in this chapter). Nevertheless, physical I/O can be the right answer for I/O-intensive applications.

Domain Configuration and Resources

The logical domains technology provides for flexible assignment of hardware resources to domains, with options for specifying physical resources for a corresponding virtual resource. This section details how domain resources are defined and controlled.

The Oracle VM Server for SPARC administrator uses the ldm command to create domains and specify their resources—the amount of RAM, the number of CPUs, and so forth. These parameters are sometimes referred to as domain constraints. The ldm command is also used to set domain properties, such as whether the domain should boot its OS when started.

A domain is said to be inactive until resources are bound to it by the ldm bind command. When this command is issued, the system selects the physical resources needed to satisfy the domain’s constraints and associates them with the domain. For example, if a domain requires 32 CPUs, the domain manager selects 32 CPUs from the set of online and unassigned CPUs on the system and gives them to the domain. The domain is then bound and can be started by the ldm start command. The ldm start command is equivalent to powering on a physical server. The ldm stop command stops a domain, either “gracefully,” by telling the domain to shut itself down, or forcibly (-f option), with the equivalent of a server power-off.
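The life cycle described above can be pictured as a small state machine. The Python sketch below is only an illustrative model, not part of the product. The state names inactive and bound come from the text; the name "active" for a started domain, the return to the bound state after a stop, and the unbind operation are assumptions added to complete the picture.

```python
from enum import Enum


class State(Enum):
    INACTIVE = "inactive"  # defined, but no physical resources assigned
    BOUND = "bound"        # resources selected and associated with the domain
    ACTIVE = "active"      # started (assumed name for the running state)


# (current state, operation) -> next state
TRANSITIONS = {
    (State.INACTIVE, "bind"): State.BOUND,
    (State.BOUND, "start"): State.ACTIVE,
    (State.ACTIVE, "stop"): State.BOUND,       # assumption: resources stay bound
    (State.BOUND, "unbind"): State.INACTIVE,   # assumption: reverse of bind
}


def apply(state, operation):
    """Return the state that follows `operation`, or raise if it is not allowed."""
    try:
        return TRANSITIONS[(state, operation)]
    except KeyError:
        raise ValueError(f"cannot {operation} a domain that is {state.value}") from None


state = State.INACTIVE
for op in ("bind", "start", "stop"):
    state = apply(state, op)
    print(f"after {op}: {state.value}")
```

In this model, attempting to start an inactive domain raises an error, which mirrors the requirement that a domain be bound before it can be started.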

If the server does not have enough available resources to satisfy the domain’s constraints, the bind will fail. However, the sum of the constraints of all domains can exceed the physical resources on the server. For example, you could define 20 domains, each of which requires 8 CPUs and 4 GB of RAM on a machine with 64 CPUs and 64 GB of RAM. Only domains whose constraints are met can be bound and started. In this case, the first 8 domains could be bound. Additional domains can be defined for occasional or emergency purposes, such as a disaster recovery domain that is defined on a server normally used for testing.
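The 20-domain example can be checked with a few lines of Python. This is a deliberately simple model that binds domains in definition order and tracks only CPU and memory totals; it is not the algorithm used by the Logical Domain Manager, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Domain:
    name: str
    cpus: int
    memory_gb: int


def bind_in_order(domains, free_cpus, free_memory_gb):
    """Bind each domain whose constraints still fit; return what was bound and what is left."""
    bound, unbound = [], []
    for domain in domains:
        if domain.cpus <= free_cpus and domain.memory_gb <= free_memory_gb:
            free_cpus -= domain.cpus
            free_memory_gb -= domain.memory_gb
            bound.append(domain.name)
        else:
            unbound.append(domain.name)  # the bind fails; the domain stays inactive
    return bound, unbound, free_cpus, free_memory_gb


# 20 domains of 8 CPUs and 4 GB each, on a server with 64 CPUs and 64 GB of RAM
domains = [Domain(f"ldom{i}", cpus=8, memory_gb=4) for i in range(1, 21)]
bound, unbound, cpus_left, memory_left = bind_in_order(domains, 64, 64)

print(f"bound: {len(bound)}, not bound: {len(unbound)}")
print(f"CPUs left: {cpus_left}, memory left: {memory_left} GB")
```

CPUs are the limiting resource here: eight domains consume all 64 CPUs while using only 32 GB of the 64 GB of RAM, so the remaining 12 domains cannot be bound until CPUs are freed.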

CPUs

Each domain is assigned exclusive use of a number of CPUs, which are also called threads, strands, or virtual CPUs (vCPUs). Current Oracle SPARC servers have 8 CPU threads per core, with up to 32 cores per chip. The number of chips (also called sockets) depends on the server model.

Virtual CPUs can be assigned individually or in units of entire cores. They should be assigned on core boundaries to prevent “false cache sharing,” which can reduce performance if multiple domains share a core and compete for the same L1 cache. The default behavior of Oracle VM Server for SPARC is to “do the right thing” and allocate different cores to domains whenever possible, even for sub-core allocations.

The best practice is to allocate virtual CPUs in whole-core units rather than in units of individual CPU threads. The commands ldm set-core 2 mydomain and ldm set-vcpu 16 mydomain both assign 16 CPU threads, but the first command requires that they be allocated as 2 entire cores, while the second allows those threads to be spread over more than 2 cores, which might also be shared with other domains. Allocating individual vCPUs in multiples of 8 also ensures allocation on core boundaries.
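The relationship between vCPU counts and core boundaries is simple arithmetic, sketched below in Python. The function names are hypothetical, and the figure of 8 threads per core is the one given in the text for current servers.

```python
THREADS_PER_CORE = 8


def split_into_cores(vcpus, threads_per_core=THREADS_PER_CORE):
    """Return (whole cores, leftover threads) for a requested number of vCPUs."""
    return divmod(vcpus, threads_per_core)


def on_core_boundary(vcpus, threads_per_core=THREADS_PER_CORE):
    """True when the request can be satisfied entirely with whole cores."""
    return vcpus > 0 and vcpus % threads_per_core == 0


for requested in (4, 12, 16):
    cores, leftover = split_into_cores(requested)
    print(f"{requested} vCPUs -> {cores} whole core(s) + {leftover} thread(s); "
          f"core-aligned: {on_core_boundary(requested)}")
```

A request for 16 vCPUs maps cleanly onto 2 cores, whereas a request for 12 vCPUs leaves 4 threads on a partially used core that another domain could end up sharing.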

Figure 4.3 is a simplified diagram of the threads, cores, and caches in a SPARC chip.

Figure 4.3 SPARC Cores, Threads, and Caches

Whole-core allocation is recommended for domains with substantial CPU load. This technique is also a prerequisite for using “hard partition licensing,” in which the domain is defined to have a maximum number of CPU cores (ldm set-domain max-cores=N mydomain). When this type of licensing is used, Oracle licensed products in the domain need to be licensed only for the maximum number of cores in the domain, rather than for the entire server.

Allocation of an entire core may be overkill for light workloads. When defining domains to consolidate small, old, or low-utilization servers with light CPU requirements, system administrators can simply allocate a partial core’s threads if that is all that is needed. Alternatively, multiple Oracle Solaris Zones can be consolidated within a logical domain. This is a popular method for ensuring highly granular resource allocation.

On servers with multiple CPU sockets, the Logical Domain Manager attempts to assign CPU cores and memory from the same socket, which avoids NUMA (non-uniform memory access) effects and reduces latency between CPUs and memory.

Oracle Solaris commands such as vmstat and mpstat can be used within the domain to monitor its CPU utilization, just as they are used on a dedicated server. The ldm list command can be used in the control domain to display each domain’s CPU utilization.

The number of CPUs in a domain can be dynamically and nondisruptively changed while the domain is running. Changes to the number of vCPUs in a domain take effect immediately. The Dynamic Resource Manager feature of Oracle VM Server for SPARC can be used to automatically add or remove CPUs based on a domain’s CPU utilization: It adds CPUs if utilization is higher than a specified threshold value, and it removes CPUs if utilization is lower than a threshold so they can be assigned to other domains that might benefit from their capacity.
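The threshold rule described above can be illustrated with a toy Python function. It is a sketch of the idea only: the parameter names, default thresholds, and one-CPU step are all hypothetical and do not reflect the actual policy settings or behavior of the Dynamic Resource Manager.

```python
def adjust_vcpus(current, utilization, lower=25, upper=75,
                 step=1, vcpu_min=1, vcpu_max=64):
    """Return the new vCPU count for a domain given its CPU utilization (percent).

    All thresholds and limits are illustrative assumptions.
    """
    if utilization > upper:
        # Busy domain: add capacity, but never exceed the configured maximum.
        return min(current + step, vcpu_max)
    if utilization < lower:
        # Idle domain: release capacity for other domains, keeping the minimum.
        return max(current - step, vcpu_min)
    return current  # within the band: leave the domain alone


for load in (90, 50, 10):
    print(f"utilization {load}% -> {adjust_vcpus(8, load)} vCPUs")
```

Using separate upper and lower thresholds leaves a band in which nothing changes, which keeps a domain whose load hovers near a single threshold from gaining and losing CPUs repeatedly.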

Virtual Network Devices

Guest domains have one or more virtual network devices connected to virtual Layer 2 network switches provided by service domains. From the guest domain’s perspective, virtual network interfaces are named vnetN, where N is an integer starting from 0 for the first virtual network device. The commands ifconfig -a and dladm show-phys display these interfaces as vnet0, vnet1, and so on, rather than physical device names such as ixgbe0. Virtual network devices can be assigned static or dynamic IP addresses, just like physical devices.

Network Connectivity and Resiliency

Every virtual network device is connected to a virtual switch exported by a service domain. The virtual switch uses the service domain’s physical network device or link aggregation as a back-end. Network traffic from the guest domain to the physical network proceeds from the guest’s virtual network device to the virtual switch, and then over the back-end network interface or aggregation. If the back-end is a link aggregation, virtual network traffic can benefit from the bandwidth, failover, and load-balancing capabilities provided in the IEEE 802.3ad standard.

A domain’s virtual network devices can be on different virtual switches so as to connect the domain to multiple networks, provide increased availability using IPMP (IP Multipathing), or increase the available bandwidth. IPMP can be used with either probe-based or link-based failure detection. To use link-based detection, specify linkprop=phys-state with the ldm add-vnet commands used to define the virtual devices. IPMP configuration is performed in the guest domain and is otherwise the same as on physical servers.

By default, network traffic between domains on the same virtual switch does not travel to the virtual switch, service domain, or physical network. Instead, it relies on a fast memory-to-memory transfer between source and destination domains. This approach reduces both latency and load on the service domain, albeit at the expense of extra LDCs between each virtual network device on the virtual switch. If a domain has a large virtual I/O configuration, then LDCs can be conserved by setting inter-vnet-link=off on the virtual network device. In this case, interdomain network traffic goes through the virtual switch (but not the physical network), instead of directly between domains.
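To see why the direct links become costly in large configurations, the Python sketch below counts channels under a simplified model: one LDC from each virtual network device to the virtual switch, plus one LDC for every pair of devices when direct inter-vnet links are enabled. This pairwise counting is an assumption made for illustration; consult the product documentation for the exact accounting.

```python
def ldc_channels(vnets_on_switch, inter_vnet_link=True):
    """Estimate LDCs used by the virtual network devices on one virtual switch.

    Simplified model (assumption): one channel per vnet to the switch, plus one
    channel per pair of vnets when direct inter-vnet links are enabled.
    """
    to_switch = vnets_on_switch
    pairwise = vnets_on_switch * (vnets_on_switch - 1) // 2 if inter_vnet_link else 0
    return to_switch + pairwise


for count in (2, 8, 32):
    with_links = ldc_channels(count, inter_vnet_link=True)
    without_links = ldc_channels(count, inter_vnet_link=False)
    print(f"{count} vnets: {with_links} LDCs with links, {without_links} without")
```

Under this model the number of channels grows quadratically with direct links enabled and only linearly with them disabled, which is the trade-off between latency and LDC consumption described above.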

Virtual network devices can be configured to exploit features such as jumbo frames, VLAN tagging, and RFC 5517 PVLANs. Virtual network devices can have bandwidth controls (ldm set-vnet maxbw=200M net0 mydomain) to prevent domains from monopolizing bandwidth. Network devices can be assigned an IEEE 802.1p class of service, and can be protected from MAC or IP spoofing.

MAC Addresses

Every virtual network device has one or more MAC addresses. MAC addresses can be assigned manually or automatically from the reserved address range of 00:14:4F:F8:00:00 to 00:14:4F:FF:FF:FF. The bottom half of this address range is used for automatic assignments; the other 262,144 (256K) addresses can be used for manual assignment.
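The size of the reserved range and the boundary between its two halves can be worked out directly from the two addresses given above. The Python sketch below does that arithmetic; the helper names are hypothetical.

```python
def mac_to_int(mac):
    """Convert a colon-separated MAC address to an integer."""
    return int(mac.replace(":", ""), 16)


def int_to_mac(value):
    """Convert an integer back to a colon-separated, uppercase MAC address."""
    raw = f"{value:012X}"
    return ":".join(raw[i:i + 2] for i in range(0, 12, 2))


first = mac_to_int("00:14:4F:F8:00:00")
last = mac_to_int("00:14:4F:FF:FF:FF")

total = last - first + 1          # addresses in the whole reserved range
half = total // 2                 # addresses in each half

auto_last = int_to_mac(first + half - 1)   # end of the bottom (automatic) half
manual_first = int_to_mac(first + half)    # start of the top (manual) half

print(f"total addresses: {total}")
print(f"automatic: 00:14:4F:F8:00:00 to {auto_last} ({half} addresses)")
print(f"manual:    {manual_first} to 00:14:4F:FF:FF:FF ({half} addresses)")
```

The range holds 524,288 addresses, so each half contains 262,144, with the manual half beginning at 00:14:4F:FC:00:00.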

A virtual network device may need more than one MAC address. Solaris 11 network virtualization permits creation of virtual NICs (vNICs) that can be used for “exclusive IP” Solaris zones that manage their own network device properties. This scheme is supported by defining virtual network devices with an alt-mac-addrs setting to allocate multiple MAC addresses—a feature sometimes called “vNICs on vNets.”

The Logical Domain Manager detects duplicate MAC addresses by sending multicast messages with the address it wants to assign, and listening for a response from another machine’s Logical Domain Manager saying that the address is in use. If such a message comes back, it randomly picks another address and tries again.

Virtual Disk

Service domains can have virtual disk services that export virtual block devices to guest domains. Virtual disks are based on back-end disk resources, which may consist of physical disks, disk slices, volumes, or files residing in NFS, ZFS, or UFS file systems. These resources could include any of the following:

- A physical block device (a disk or LUN, including iSCSI), such as /dev/dsk/c1t48d0s2

- A slice of a physical device or LUN, such as /dev/dsk/c1t48d0s0

- A disk image file residing in a file system mounted to the service domain such as /path/to/filename

- A ZFS volume; the command zfs create -V 100m ldoms/domain/test/zdisk0 creates the back-end /dev/zvol/dsk/ldoms/domain/test/zdisk0

- A volume created by Solaris Volume Manager (SVM) or Veritas Volume Manager (VxVM)

- A CD-ROM/DVD or a file containing an ISO image

Back-ends based on physical disks, LUNs, or disk slices provide the best performance. There is no difference between defining them as /dev/dsk or /dev/rdsk devices—that is, back-ends created through both of these techniques are treated identically. Virtual disks specifying an entire disk should use the “s2” slice; individual slices are allocated by using other slice numbers.

Virtual disks based on files in the service domain are convenient to use: They can be easily copied, backed up, and, when using ZFS, cloned from a snapshot. This approach provides flexibility, but performs less well than physical disk back-ends.

Files in an NFS mount can be accessed from multiple servers, though, as with LUNs, they should not be accessed from different hosts at the same time. Different kinds of disk back-ends can be used in the same domain: The system volume for a domain can use a ZFS file system back-end, while disks used for databases or other I/O intensive applications can use physical disks.

Virtual disks on a local file system, such as ZFS, cannot be used by multiple hosts at the same time, so they will not work with live migration. If you want to perform live migration, you must use virtual disk back-ends that can be accessed by multiple hosts. Those options include NFS, iSCSI, or Fibre Channel LUNs presented to multiple servers.

Disk Device Resiliency

Redundancy can be provided by using virtual disk multipath groups (mpgroups), in which the same virtual disk back-end is presented to the guest by different service domains in active/passive pairs. This scheme provides fault tolerance in the event of service domain failure or loss of a physical access path. If a service domain is rebooted or its path fails, then I/O proceeds down the other member of the mpgroup. The back-end storage can be a LUN accessed by FC-AL adapters attached to each service domain, or even NFS mounted to both service domains.

The commands in Listing 1 show how to define a virtual disk served by a control domain and an alternative service domain. The ldm list-bindings and ldm set-vdisk commands can be used to display and set which of the mpgroup paths is the active path.

    # ldm add-vdsdev mpgroup=foo \
        /path-to-backend-from-primary/domain ha-disk@primary-vds0
    # ldm add-vdsdev mpgroup=foo \
        /path-to-backend-from-alternate/domain ha-disk@alternate-vds0
    # ldm add-vdisk ha-disk ha-disk@primary-vds0 myguest

This approach is the recommended and most commonly used method for providing path and service domain resiliency. Because the active path can be changed on demand, multipath groups can also be used to balance loads over service domains and host bus adapter (HBA) paths.
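As a minimal sketch of inspecting and switching the active mpgroup path, the following script prints the two commands involved, using the names from Listing 1. The DRY_RUN variable and the run and switch_active_path helpers are illustrative conventions, not part of ldm. The volume=volume-name@service-name form of ldm set-vdisk is assumed from the ldm(1M) manual page and should be checked against your release.

```shell
#!/bin/sh
# Dry-run sketch: prints the ldm commands instead of running them unless
# DRY_RUN=0. DRY_RUN and run() are illustrative conventions, not ldm features.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"      # show what would be executed
    else
        "$@"           # execute on a real control domain
    fi
}

# switch_active_path DISK DOMAIN VOLUME@SERVICE
# Shows the current bindings, then requests that the named mpgroup member
# become the active path (volume= form assumed from ldm(1M); verify locally).
switch_active_path() {
    disk=$1
    domain=$2
    target=$3
    run ldm list-bindings "$domain"
    run ldm set-vdisk "volume=$target" "$disk" "$domain"
}

switch_active_path ha-disk myguest ha-disk@alternate-vds0
```

Running the script with DRY_RUN=0 on a control domain would execute the commands rather than print them.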

Multipathing can also be provided within a single I/O domain with Solaris multiplexed I/O (MPxIO), by ensuring that the domain has multiple paths to the same device, for example, two FC-AL HBAs to the same SAN array. You enable MPxIO in the control or service domain by running the command stmsboot -e. The result of this command is a single but redundant path to the same device. The single device is then configured into the virtual disk service, which provides path resiliency within the same service domain. This strategy, which can be combined with mpgroups to provide redundancy in case a service domain fails, is a frequently used production deployment.

Virtual disk redundancy can also be established by providing multiple virtual disks, from different service domains, and arranging them in a redundant ZFS mirrored or RAIDZ pool within the guest. The virtual disk devices must be specified with the timeout parameter (ldm set-vdisk timeout=seconds diskname domain). If any of the disk back-ends fail (i.e., path, service domain, or media), then the fault is propagated to the guest domain; the guest domain marks the virtual disk as unavailable in the ZFS pool, but the ZFS pool continues in operation. When the defect is repaired, the system administrator can indicate that the device is available again.
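The guest-side mirroring approach can be sketched as a short command plan. The script below only prints commands, because the steps run on two different systems: the ldm set-vdisk commands on the control domain and the zpool commands inside the guest. The helper functions, the 30-second timeout, the disk names (disk-pri, disk-alt), the pool name (datapool), and the guest device names (c1d1, c1d2) are all placeholders chosen for illustration.

```shell
#!/bin/sh
# Prints a command plan only; nothing is executed, because the steps belong
# on two different systems (control domain and guest domain).

# control_domain_plan DOMAIN SECONDS DISK...
# One "ldm set-vdisk timeout=..." command per virtual disk.
control_domain_plan() {
    domain=$1
    seconds=$2
    shift 2
    for disk in "$@"; do
        echo "ldm set-vdisk timeout=$seconds $disk $domain"
    done
}

# guest_plan POOL DEVICE_A DEVICE_B
guest_plan() {
    echo "zpool create $1 mirror $2 $3"   # build the mirrored pool
    echo "zpool status $1"                # check pool health after a fault
    echo "zpool online $1 $3"             # return a repaired device to service
}

# Placeholder names: one virtual disk from each service domain.
control_domain_plan myguest 30 disk-pri disk-alt
guest_plan datapool c1d1 c1d2
```

The last printed command corresponds to the administrator indicating that a repaired device is available again.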

Insulation from media failure can be provided by using virtual disk back-ends in a redundant ZFS pool in the service domain, with mirror or RAIDZ redundancy. This technique can also be used with iSCSI LUNs or NFS-based files exported from an Oracle ZFS Storage Appliance (ZFSSA) configured with mirrored or RAIDZ, which has the advantage of permitting live migration since the storage is accessed via the network. These options offer resiliency in case of a path failure to a device, and can be combined with mpgroups to provide resiliency against service domain outage.

Virtual HBA

Oracle VM Server for SPARC 3.3, delivered with Oracle Solaris 11.3, added a new form of virtual I/O by introducing virtual SCSI host bus adapters that support the Sun Common SCSI Architecture (SCSA) interface.

The virtual I/O design described earlier is flexible and provides good performance, but it does not support devices such as tape, and it does not support some native Solaris features, such as Solaris MultiPathing disk I/O (MPxIO) for active/active path management. Virtual HBA (vHBA) eliminates these restrictions.

The logical domain administrator defines virtual SANs on a physical SAN (ldm add-vsan), and then defines guest virtual HBAs on the virtual SAN (ldm add-vhba). Guests see all the devices on the virtual HBA and SAN, just as with a non-virtualized system. A single command gives access to all the devices, instead of two commands (ldm add-vdsdev, ldm add-vdisk) being required for each virtual disk.

Virtual HBAs can be defined on any supported SPARC server using domains running Solaris 11.3 or later. The administrator lists the physical HBAs on the service domain, defines a virtual SAN on the domain with the guest’s devices, and then creates a virtual HBA for the guest. This can be done dynamically while the guest is running. Listing 2 shows creation of a virtual SAN and a virtual HBA.

Listing 2 Define a Virtual HBA

# ldm ls-hba -d primary

NAME VSAN

---- ----

...snip...

MB/RISER2/PCIE2/HBA0,1/PORT0,0

...snip...

# ldm add-vsan MB/RISER2/PCIE2/HBA0,1/PORT0,0 my-vsan primary

MB/RISER2/PCIE2/HBA0,1/PORT0,0 resolved to device: /pci@0/pci@0/pci@9/SUNW,emlxs@0,1/fp@0,0

# ldm add-vhba my-vhba my-vsan myguest

The guest domain now has a host bus adapter. It sees the devices on this HBA just as if it had exclusive access to a physical HBA in a non-virtualized environment, with actual device names and features, as shown in Listing 3.

Listing 3 Guest Domain Sees Devices on Virtual HBA

my-guest # format

Searching for disks...done

AVAILABLE DISK SELECTIONS:

0. c0t600144F0EDE50676000055A81BB2001Ad0 <SUN-ZFS 7420-1.0-36.00GB>

/scsi_vhci/disk@g600144f0ede50676000055a81bb2001a

1. c0t600144F0EDE50676000055A815160019d0 <SUN-ZFS 7420-1.0 cyl 778 alt 2 hd 254 sec 254>

/scsi_vhci/disk@g600144f0ede50676000055a815160019

2. c1d0 <SUN-DiskImage-40GB cyl 1135 alt 2 hd 96 sec 768>

/virtual-devices@100/channel-devices@200/disk@0

Specify disk (enter its number): ^C

Virtual HBA is a major advance in Oracle VM Server for SPARC capability. It provides virtual I/O with full Solaris driver capabilities and adds virtual I/O for tape and other devices. Moreover, vHBA consumes only one LDC for all the devices on the HBA, instead of one LDC per device, which eliminates constraints on disk device scalability. The original virtual disk and physical disk architectures are not eliminated or deprecated, and can be used concurrently with vHBA.

Console and OpenBoot

Every domain has a console, which is provided by a virtual console concentrator (vcc). The virtual console concentrator is usually delivered by the control domain, which then runs the Virtual Network Terminal Server daemon (vntsd) service.

By default, the daemon listens for localhost connections using the Telnet protocol, with a different port number assigned for each domain. A guest domain operator connecting to a domain’s console first logs into the control domain via the ssh command so that no passwords are transmitted in cleartext over the network; the telnet command can then be used to connect to the console. Domain console authorization can be implemented to restrict which users can connect to a domain’s console. Normally, only system and guest domain operators should have login access to a control domain.
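The two-step console connection can be sketched as a single ssh invocation that starts telnet on the control domain, so the Telnet session itself stays on localhost. The console_command helper, the operator account, the host name, and port 5000 are hypothetical; the console port for a domain is shown in the CONS column of ldm list on the control domain.

```shell
#!/bin/sh
# console_command USER CONTROL_DOMAIN_HOST PORT
# Prints an ssh command that logs in to the control domain and runs telnet
# there; -t requests a terminal so the console session is interactive.
console_command() {
    echo "ssh -t $1@$2 telnet localhost $3"
}

# Hypothetical operator account, host name, and console port.
console_command operator control-domain.example.com 5000
```

The printed command can be pasted into a terminal on the operator's workstation.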

Both Oracle VM Manager and Oracle Enterprise Manager Ops Center provide a graphical user interface for managing systems. The GUI includes a point-and-click method for launching virtual console sessions, with no need to log into the control domain.

Guest console traffic is logged on the virtual console service domain in text files under /var/log/vntsd/domainname. These files, which are readable only by root, provide an audit trail of guest domain console activity. Logging is available, and on by default, when the service domain runs Solaris 11 or later; it can be enabled or disabled by issuing the command ldm set-vcons log=on|off domainname.
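A small sketch of toggling console logging follows. It assumes the ldm(1M) form ldm set-vcons log=on|off domain and only prints the command; the vcons_log_command helper and the domain name myguest are illustrative.

```shell
#!/bin/sh
# vcons_log_command DOMAIN on|off
# Prints the ldm command that toggles console logging for DOMAIN and
# rejects any value other than "on" or "off".
vcons_log_command() {
    case $2 in
        on|off)
            echo "ldm set-vcons log=$2 $1"
            ;;
        *)
            echo "usage: vcons_log_command DOMAIN on|off" >&2
            return 1
            ;;
    esac
}

vcons_log_command myguest off

# As root on the service domain, earlier console traffic can be reviewed under:
echo "ls -l /var/log/vntsd/myguest"
```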

Cryptographic Accelerator

SPARC processors are equipped with on-chip hardware cryptographic accelerators that dramatically speed up cryptographic operations. Such accelerators improve security by reducing the CPU consumption devoted to encrypted transmissions, making it feasible to encrypt network transactions while maintaining performance. Live migration automatically leverages cryptographic acceleration.

This feature does not require administrative effort to activate on current servers. On older SPARC servers (T3 and earlier), each CPU core has a cryptographic unit that the administrator adds to domains with the command ldm set-crypto N domain, where N is the number of cores.

Memory

Oracle VM Server for SPARC dedicates real memory to each domain, instead of oversubscribing guest memory and swapping between RAM and disk, as some hypervisors do. On the downside, this approach limits the number and memory size of domains running on a single server to the amount that fits in RAM. On the upside, it eliminates problems such as thrashing and double paging, which can harm performance when hypervisors run virtual machines in virtual memory environments.

The memory requirements of a domain running the Oracle Solaris OS are the same as when running Solaris on a physical machine. In other words, if a workload needs 8 GB of RAM to run efficiently on a dedicated server, it will need the same amount when running in a domain. Memory can be dynamically added to and removed from a running domain without service interruption. Removing memory requires time to evacuate pages, which may even cause guest domain swapping if the memory load is high. Note that some pages might hold kernel data, which prevents them from being removed from the domain.
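Dynamic memory changes can be sketched with the ldm add-memory, remove-memory, and set-memory subcommands. The script prints the commands by default; the DRY_RUN variable, the run and resize_memory helpers, the domain name myguest, and the sizes are assumptions for illustration.

```shell
#!/bin/sh
# Dry-run sketch: prints the ldm commands unless DRY_RUN=0.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"      # show what would be executed
    else
        "$@"           # execute on a real control domain
    fi
}

# resize_memory DOMAIN add|remove|set SIZE
# Maps the action onto ldm add-memory, remove-memory, or set-memory.
resize_memory() {
    case $2 in
        add|remove|set)
            run ldm "$2-memory" "$3" "$1"
            ;;
        *)
            echo "usage: resize_memory DOMAIN add|remove|set SIZE" >&2
            return 1
            ;;
    esac
}

resize_memory myguest add 4G      # grow the running domain
resize_memory myguest remove 2G   # shrink it; page evacuation takes time
run ldm list myguest              # confirm the resulting MEMORY value
```

A removal request on a busy domain may take a while to complete, for the reasons described above.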