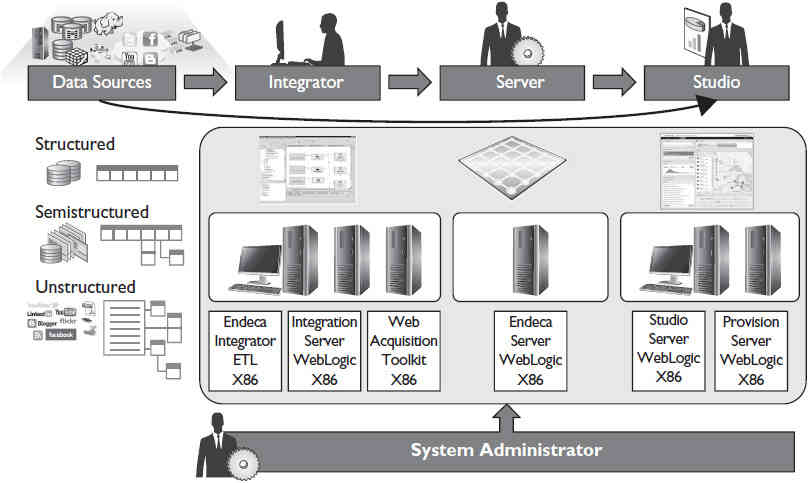

Endeca is a user-centric product; its infrastructure is sophisticated and of enterprise scale. Endeca Server sits at the center of this complex ecosystem. Refer to Figure 1 for an overview of the Endeca ecosystem. Endeca Integrator ETL pushes data sets into Endeca Server, and Endeca Server makes them available to Endeca Studio.

FIGURE 1. Overview of the Endeca ecosystem

Hardware

Before we delve into the approach for selecting hardware, let’s discuss some realities of hardware selection. This often is determined by capacity needs for the immediate future. This approach prevents purchasing more server hardware and product licenses than are needed. However, this approach can be short-sighted when taking into account the normal productive life span of enterprise server hardware, which is three to five years. As you begin the hardware selection process for Endeca Server, assume that its usage will be twice or three times what is projected for the immediate future. This approach allows for growth and ensures that the Endeca Server installation will be ready when users request new projects or uses for Endeca.

Endeca Server is a Java application that is designed for 64-bit Intel x86 processor servers. Server hardware selection involves selecting sufficient processor cores, server memory, and storage to accommodate the anticipated workload. The section that follows describes an approach to capturing workload information and estimating the number of processor cores required. Keep in mind you should use the information presented as a general guide, and if you are considering the purchase of Endeca Server and hardware, you should consult with Oracle engineers to arrive at a more precise estimate.

Workload Analysis and Processor Core Count

Processor core count determines the number of threads of execution that can occur concurrently on a server, without latency. When the number of threads of execution exceeds the available CPU cores, the threads of execution must wait. Endeca Server is designed to limit the number of threads of execution it uses to avoid waits. In this blog, we introduced the Dgraph process of Endeca, the main computational module that provides the features of Endeca Server. Dgraph processes are associated with data domains and have parameters stored in data domain profiles. These parameters affect how the Dgraph process behaves. The parameter --num-compute-threads specifies the number of computational threads in the Dgraph threading pool. This value is usually set to the number of processor cores available on the server. With this parameter, the number of threads possible for the Dgraph process is directly linked to the number of processor cores on the server, and this dictates the throughput of Endeca Server and the amount of workload the server can process. Hence, in selecting the number of processor cores an Endeca Server should have, it is necessary to estimate workload. For Endeca Server, these factors determine workload:

- Number of records to be processed

- Number of concurrent users

- Query complexity

- Number of queries per Endeca Studio page

Determining the number of records to be processed requires that the sources to be used for Endeca Server are known and understood. An exact number is not required; a rough idea is enough. A simple way to estimate it is to total the records in the structured and semistructured source tables. The number of concurrent users is the number of users you expect to be using Endeca at the same time. This is not the same as the number of users who have logins and are capable of logging in to Endeca, and it is not necessarily the number of named user licenses owned. Query complexity also has a significant impact on the performance of Endeca Server and is determined by how the server is used. For the purposes of analyzing hardware requirements, we will use these three levels of query complexity:

- Low complexity usage: The majority of server usage is through Endeca Studio, with few queries.

- Medium complexity usage: This level consists of Endeca Studio usage and simple EQL queries, with no more than two defines per query.

- High complexity usage: This level consists of usage that includes complex, multistep EQL queries.
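The workload factors and complexity levels above can be captured in a small data structure. The following is a minimal sketch, not an Oracle-provided tool: the `Workload` class, its field names, and the rule that anything with more than two defines per query counts as high complexity are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Rough workload description (hypothetical structure, not an Endeca API)."""
    records: int                    # approximate total of source records
    concurrent_users: int           # users active at the same time, not total logins
    uses_eql: bool                  # False when usage is Endeca Studio only
    max_defines_per_query: int = 0  # defines in the most complex EQL query


def complexity_level(workload: Workload) -> str:
    """Map a workload to the low/medium/high levels described above.

    Assumption: more than two defines per query is treated as "high".
    """
    if not workload.uses_eql:
        return "low"
    if workload.max_defines_per_query <= 2:
        return "medium"
    return "high"
```

A workload classified this way can then be compared against the core-count charts for the matching row count.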

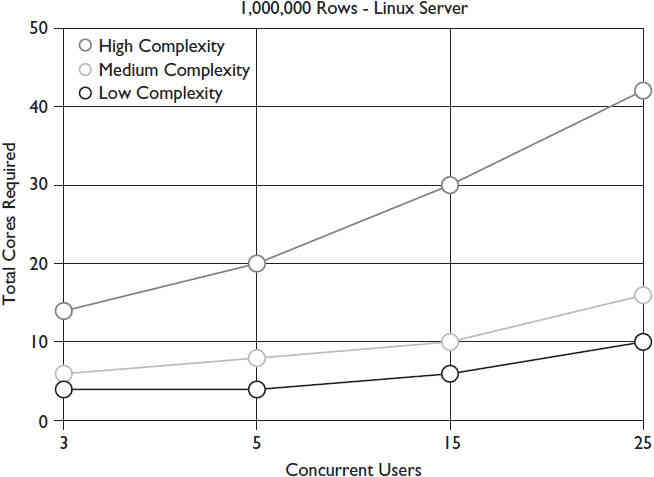

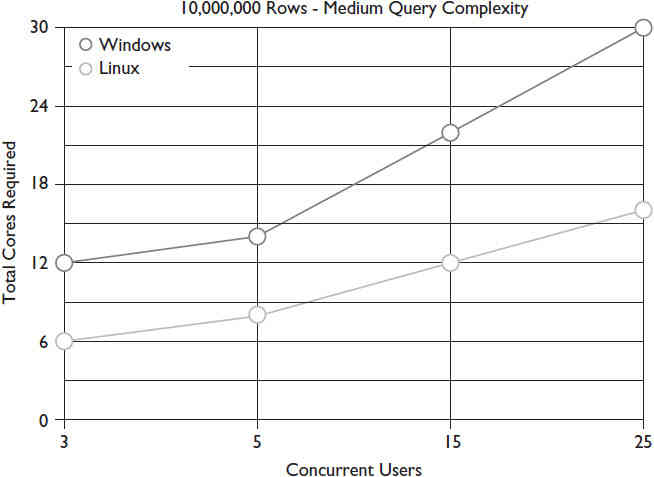

The next three figures present charts that depict processor cores required for Endeca Server, using concurrent users, number of records to be processed, and query complexity as variables. These charts are based on models that are used to forecast workload. Figure 2 shows the estimated core requirements for an Endeca Server with 1 million rows of data on a Linux server. The horizontal axis shows the number of concurrent users, and the vertical axis shows the number of x86 processor cores required.

FIGURE 2. Cores vs. concurrent users for a 1,000,000-row Endeca Server

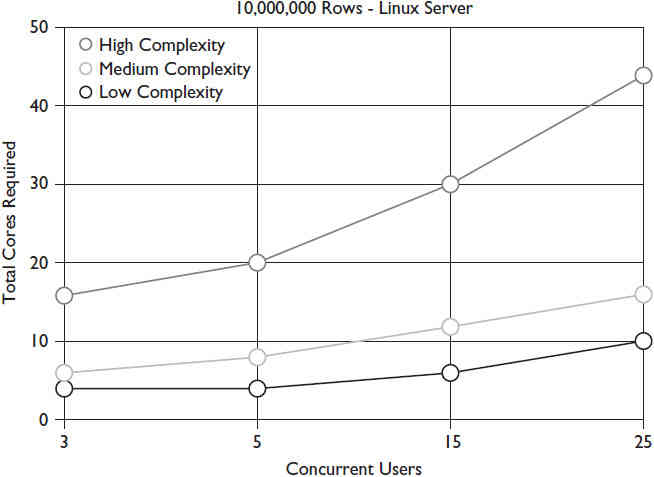

Figure 3 shows the estimated core requirements for an Endeca Server with 10 million rows of data on a Linux server.

FIGURE 3. Cores vs. concurrent users for a 10,000,000-row Endeca Server

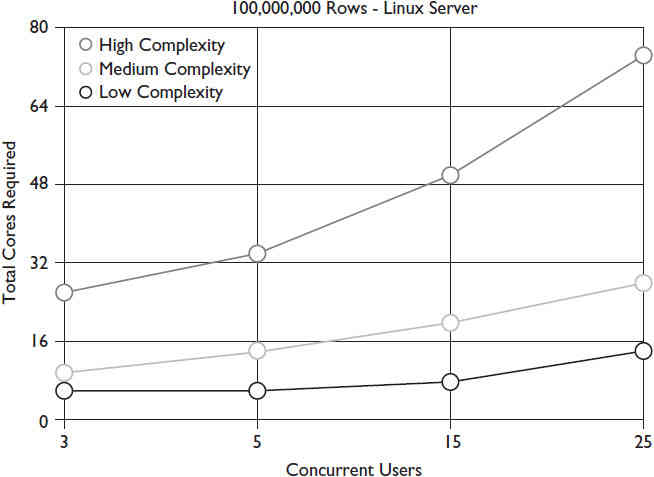

Figure 4 shows the estimated core requirements for an Endeca Server with 100 million rows of data on a Linux server.

FIGURE 4. Cores vs. concurrent users for a 100,000,000-row Endeca Server

Based on the information shown, you can arrive at the following conclusions regarding hardware selection:

- Complex query installations should limit the number of concurrent users to avoid the need for a huge number of processor cores. If a higher number of concurrent users is expected, then a large multicore server or clustering must be used.

- Installations that will have low or medium complexity queries can get by with servers that have as few as 8 or 12 cores, so long as the number of concurrent users remains low, on up to 10 million rows of data.

Server Memory

Besides processor cores, server memory is one of the most critical resources for enterprise servers. Part of Endeca’s high performance is due to its in-memory performance, and given this, Endeca Server must be deployed on a server with ample memory. Memory has become economically priced in the last three to five years, so there is really no reason not to purchase servers with as much memory as possible. Seldom do systems administrators and managers regret purchasing servers with too much memory, but they always regret purchasing systems with too little memory. Oracle does not provide any published guidelines regarding memory requirements, other than a minimum of 8GB. For estimating and budget purposes, you can use the following formulas:

- Low complexity queries: 8GB + processor cores × 4GB

- Medium and high complexity queries: 8GB + processor cores × 8GB

Also, be aware that if you plan on using data enrichment plug-ins, such as Term Extraction, in Endeca Studio, you should plan on adding an extra 10GB for each instance of a data enrichment plug-in that is expected to run concurrently in the data domain. This additional memory should be provisioned on all Endeca Server machines hosting the data domain.
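The memory formulas and the data enrichment allowance can be combined into one estimate. This is a minimal sketch for budgeting only; the function name and parameters are hypothetical, and the figures are the rules of thumb given above, not Oracle-published requirements.

```python
def estimate_memory_gb(cores: int, complexity: str, enrichment_instances: int = 0) -> int:
    """Estimate server memory in GB from the budgeting formulas above.

    complexity is "low", "medium", or "high"; enrichment_instances is the
    number of data enrichment plug-in instances expected to run concurrently.
    """
    gb_per_core = {"low": 4, "medium": 8, "high": 8}
    if complexity not in gb_per_core:
        raise ValueError(f"unknown complexity level: {complexity!r}")
    base_gb = 8  # stated minimum
    return base_gb + cores * gb_per_core[complexity] + 10 * enrichment_instances


print(estimate_memory_gb(8, "low"))         # 8 + 8*4 = 40
print(estimate_memory_gb(12, "medium", 2))  # 8 + 12*8 + 2*10 = 124
```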

Server Storage

Endeca Server storage must be high performance; following these guidelines helps ensure that storage performance does not become an issue with your Endeca Server installation. Internal, locally attached storage or SAN-based storage can be used. If SAN-based storage is used, then connectivity to it should be via Fibre Channel. iSCSI should be used only for test environments, not for production environments. Individual drive sizes should be kept small, not to exceed 147GB per spindle. Only RAID 10 should be used; RAID 5 should never be used because of the overhead it imposes. Lastly, 15,000 RPM drives are preferred, but 10,000 RPM drives can also be used.

The rationale for these recommendations is in part that Endeca Server stores all the files for indexes in a location specified by the endeca-data-dir parameter in the Endeca.properties file. The indexes for all data domains created by Endeca Server will be stored in the directory specified by this parameter. Because of this, it is not possible to provide physically separate storage for data domains located on the same Endeca Server. When specifying storage for Endeca Server, you need to be mindful of this limitation and avoid procuring too few drives or drives larger than 147GB. Ten 147GB drives in a RAID 10 array will provide 735GB of storage and will be sufficient for most Endeca Server installations. Because 8 percent of all drives fail within their first two years of service, there is a disadvantage to purchasing excessive storage. Moreover, drive systems are relatively easy to expand when future needs arise.
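The 735GB figure follows from RAID 10 mirroring: half of the raw capacity holds mirror copies. A short sketch of that arithmetic, with a hypothetical helper name:

```python
def raid10_usable_gb(drive_count: int, drive_size_gb: int) -> float:
    """Usable capacity of a RAID 10 array: half of the raw capacity is mirrored."""
    if drive_count < 4 or drive_count % 2 != 0:
        raise ValueError("RAID 10 needs an even number of drives, at least four")
    return drive_count * drive_size_gb / 2


print(raid10_usable_gb(10, 147))  # 735.0
```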

Operating System

Now that we have covered the server hardware, let’s turn our attention to the operating system for Endeca Server. Endeca Server can be installed on two operating systems: Linux (Oracle Enterprise Linux or Red Hat Enterprise Linux) or Microsoft Windows. Between these two choices of operating systems, Linux will perform better than Microsoft Windows because Endeca Server has been optimized for Linux and processes a greater workload with fewer processor cores. Figure 5 illustrates the dramatic difference in performance between Linux and Windows.

FIGURE 5. Required cores for Linux versus Windows for 10,000,000 rows and medium query complexity

Besides the performance differences with Microsoft Windows, the logistical issues related to supporting this operating system can affect production environments. Security patches are issued on a weekly basis, and some require an outage. This is not preferred for a production data server. Windows also lacks the command-line scripting capability available in Linux, which is used by systems administrators to automate maintenance tasks.

Notes on Endeca Server on Linux

As mentioned, Endeca Server can be installed on either Oracle Enterprise Linux (OEL) or Red Hat Enterprise Linux (RHEL), version 5 or 6. Oracle strongly recommends installing version 6 of either OEL or RHEL to take advantage of control groups. Control groups, also called cgroups, are a kernel feature for controlling resources. They provide a means to define and allocate system resources to one or more specific processes, while also controlling the utilization of these resources at a high level. This ensures that the processes do not consume excessive resources. Hence, cgroups help avoid situations where a hosting machine runs out of memory and must shut down because the applications running on it have taken over all available resources. A Linux shell script called setup_cgroups.sh is provided with Endeca Server and must be run to enable cgroups. Once cgroups are enabled, Endeca Server relies on them to allocate resources to all the data domains it is hosting.

Endeca Server can use the Oracle Unbreakable Enterprise Kernel (UEK). The advantage of UEK is that zero-downtime updates are possible with Ksplice, a feature that must be licensed for each server. Ksplice enables customers to apply security and other critical fixes without rebooting. The zero-downtime capability should be considered for critical deployments of Endeca.

Application Server

Endeca Server is a Java application that must be deployed on an application server and requires Oracle WebLogic. WebLogic features an advanced, modern web-based user interface that makes installing Endeca Server easy. WebLogic has a library of dashboards for monitoring and also allows the creation of custom dashboards. We’ll cover these dashboards in the performance monitoring section.

Exalytics

One other option to consider for hardware for Endeca Server and other Endeca core components is Oracle Exalytics. Oracle Exalytics is an engineered system by Oracle targeted at business intelligence and enterprise performance management applications. Exalytics is designed for in-memory analytics and, as a result, ships with large amounts of RAM.

The Oracle Exalytics server is suitable for large deployments of Endeca Server. Exalytics comes in two configurations:

- Exalytics X3-4 features 40 cores, 2TB of RAM, 5.4TB of raw storage, and 2.4TB of PCIe flash storage

- Exalytics X5-8 features 128 cores, 4TB of RAM, 9.6TB of raw storage, and 6.4TB of PCIe flash storage

While Exalytics is designed for business intelligence and enterprise performance management applications, it is also certified by Oracle for Endeca. For more details on Oracle Exalytics, confer with Oracle technical resources to determine how Exalytics can be configured for your particular Endeca deployment.

Securing Endeca Server

Endeca Server has two basic mechanisms for providing security, discussed in the next two sections. In addition to these two built-in mechanisms, Endeca Server should be secured with standard network topology and access controls since most threats against Endeca Server will be over network communications.

Endeca Server SSL

Endeca Server uses Secure Sockets Layer (SSL) to enable secure communications with other Endeca components. Using SSL is optional and is a decision that must be made at the time Endeca Server is installed on WebLogic. Oracle recommends using SSL for all production installations of Endeca Server. The use of SSL is a decision that affects all further connections with Endeca Server: If SSL is selected, then connectivity between Endeca Studio, Endeca Integrator, and subcomponents must utilize SSL.

EQL Records Filters

Endeca Server EQL has one type of records filter, known as a DataSourceFilter, that limits the scope of records available to a user. This filter limits the scope of requests prior to the execution of a user EQL query and provides a type of filter for fine-grained access. This filter is created by developers using the Endeca Integrator ETL.

Other Security Considerations

The server OS must be hardened to the greatest extent possible. We won’t go into the details of how this is done because security recommendations evolve as new threats emerge, so the best, most current guidelines are available from online sources. The Oracle Technology Network and the National Security Agency (NSA) web sites both have excellent guides to securing Linux and Windows operating systems.

Endeca Server Cluster

Endeca Server Cluster is an optional method of deploying Endeca Server and is a deployment of multiple Endeca Server instances on multiple servers (an instance being a single installation of Endeca Server). From a physical standpoint, Endeca Server Cluster must be installed on homogeneous servers, meaning each server must be identical in terms of server make and model, CPU core count, and memory. We refer to these servers as hardware nodes. Endeca Server Cluster requires that a clustered file system be available to all hardware nodes to host the indexes.

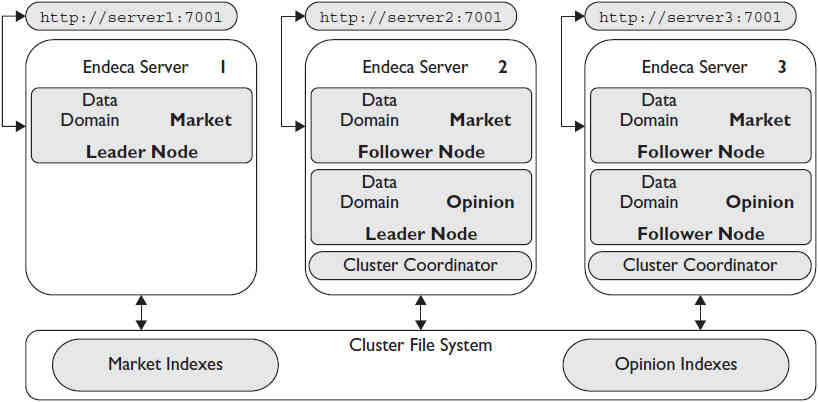

In Endeca Server Cluster, the primary processing entity is Dgraph nodes, which are Dgraph processes distributed across the hardware nodes that service query requests. Dgraph nodes that service query requests are known as follower nodes. With Endeca Server Cluster, only one Dgraph node can service write requests, and this node is known as the leader node. A service known as the Cluster Coordinator is installed with Endeca Server Cluster and, as its name implies, provides coordination and communication between cluster nodes. Figure 6 shows an example Endeca Server Cluster deployment.

FIGURE 6. Endeca Server Cluster example

Reviewing Figure 6, you can make the following observations that lead to more understanding of an Endeca Server Cluster:

- Data domains in Endeca Server Cluster are composed of data domain nodes serviced by the Dgraph nodes running on each hardware node.

- Multiple data domains can be hosted on Endeca Server Cluster. In this example, there are two data domains: market and opinion. Note that data domain opinion is not shown on Endeca Server 1. The reason for this is that the data domain profile for data domain opinion is not configured to run on Endeca Server 1. Since the leader node for data domain market is on Endeca Server 1, this would allow all the resources of this node to be dedicated to servicing the data domain market leader node.

- Each node has a unique hostname and port. Any of the URLs for the cluster can be used to query or write data to either data domain. Endeca Server Cluster routes all write requests to the leader nodes and balances query requests across follower nodes.
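The routing behavior described in the last observation can be sketched as follows. This is an illustration only, not Endeca Server code: the class name and hostnames are hypothetical, and round-robin is assumed as the balancing policy because the text does not say which policy the cluster uses.

```python
from itertools import cycle


class DataDomainRouter:
    """Toy router: writes go to the leader, queries rotate across followers."""

    def __init__(self, leader: str, followers: list[str]):
        if not followers:
            raise ValueError("at least one follower node is required")
        self.leader = leader
        self._followers = cycle(followers)  # assumed round-robin policy

    def route(self, is_write: bool) -> str:
        """Return the node that should service the request."""
        return self.leader if is_write else next(self._followers)


# Hypothetical hostnames for the data domain "market".
router = DataDomainRouter("endeca1", ["endeca2", "endeca3", "endeca4"])
write_target = router.route(is_write=True)
query_targets = [router.route(is_write=False) for _ in range(4)]
```

Here `write_target` is always the leader, while `query_targets` walks through the followers in turn and wraps around.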

The clustered file system shown at the bottom of Figure 6 is an essential element of Endeca Server Cluster in that it provides storage for each of the data domain indexes and is accessible to all hardware nodes. Oracle recommends using Network File System (NFS) as the clustered file system for Endeca Server Cluster. NFS is often hosted on a Linux server, and if this option is chosen, a multimaster technology like HighlyAvailableNFS should be used to ensure you eliminate the NFS server as a single point of failure. HighlyAvailableNFS allows a backup server to recover current NFS activity should the primary NFS server fail.

The best option for providing the NFS clustered file system for Endeca Server Cluster is a Network Attached Storage (NAS) server and, in particular, the Oracle ZFS appliance. The ZFS appliance supports Fibre Channel connectivity and has a wide array of management features for backup and replication, all managed with an easy-to-use web interface.

Now that we have covered the major elements of Endeca Server Cluster, let’s examine two benefits not available in a nonclustered Endeca Server deployment. They are discussed in the next two sections.

High Availability

Most information technology service groups and organizations are required to operate under service level agreements (SLAs) requiring a minimum amount of uptime per month or year for enterprise systems. To meet these uptime requirements on enterprise server products, clustering technologies are often deployed. When clustering technologies are deployed across multiple servers, this lessens the risk of service loss due to hardware failure. With clustering technologies, the loss of a single server because of hardware failure does not cause a loss of service. This capability is known as high availability, and here are a few key considerations regarding Endeca Server Cluster high availability:

- If a leader node fails, the Cluster Coordinator assigns leader node functionality to one of the surviving follower nodes.

- If a follower node fails, Endeca Server Cluster no longer routes query requests to the failed follower node.

- Cluster Coordinator must be running on three nodes to ensure Endeca Server Cluster is capable of monitoring and taking corrective action in the event of a node failure. If fewer than three nodes are running Cluster Coordinator, then the high availability capability is degraded, and the cluster has increased availability. Increased availability is a term denoting more fault tolerance than a single-node installation, but not true high availability.
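These failure-handling rules can be summarized in a short sketch. It is a simplified model, not the Cluster Coordinator's actual algorithm: the function names are hypothetical, and promoting the first surviving follower is an assumption, since the text does not say how the new leader is chosen.

```python
def availability_mode(coordinator_nodes: int) -> str:
    """Classify the cluster by how many nodes run Cluster Coordinator."""
    return "high availability" if coordinator_nodes >= 3 else "increased availability"


def handle_failure(leader: str, followers: list[str], failed: str) -> tuple[str, list[str]]:
    """Return the (leader, followers) that remain after a node fails."""
    if failed == leader:
        if not followers:
            raise RuntimeError("no surviving follower to promote")
        # Assumption: the first surviving follower becomes the new leader.
        new_leader, *remaining = followers
        return new_leader, remaining
    # A failed follower simply stops receiving query requests.
    return leader, [node for node in followers if node != failed]
```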

Horizontal Scaling

When an enterprise server’s key resources, processor cores and memory, can no longer support its normal operations, a hardware upgrade is necessary. Usually, this involves procuring a new server with more processor cores and more memory. This type of upgrade is known as vertical scaling. Migrating an enterprise server to new hardware is one of the more complex and difficult tasks facing IT organizations. With clustered enterprise servers, like Endeca Server Cluster, adding processor cores does not require vertical scaling. Rather, a new server can be procured and becomes an additional hardware node running Endeca Server Cluster. This capability is known as horizontal scaling and is one of the most compelling reasons to deploy Endeca Server Cluster.

Load Balancers and Endeca Server

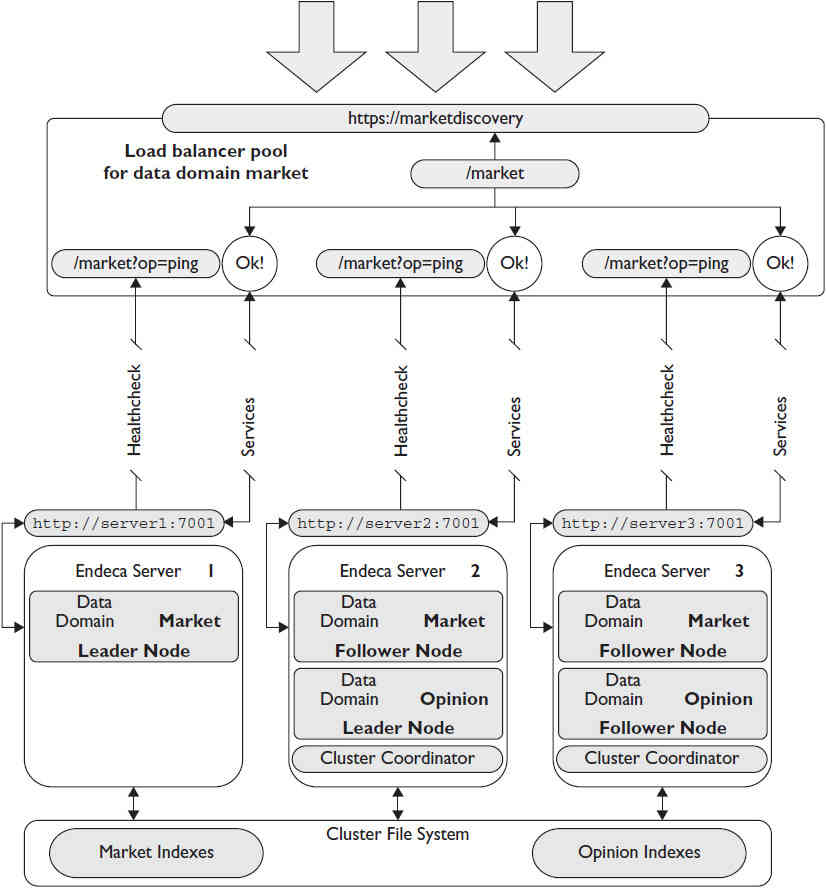

Most IT organizations use load balancers in their enterprise infrastructure because of the capabilities and features they provide and because organizations can easily add configurations to support the server. Load balancers are fabric-enabled devices that can support many applications, and a pool and a virtual IP address are the main components of a single configuration in a load balancer. The pool is a group of enterprise resources that are exposed to the load balancer in the form of URLs. In the case of Endeca Server, these URLs are composed of the hostname, port number, and domain name used to establish connectivity to a data domain. The virtual IP (VIP) address resides on the load balancer and is associated with a network domain name that is descriptive of the Endeca Server data domain. For example, for a data domain named market, you could choose the network domain name marketdatadomain.mycompany.com. Figure 7 shows an example of a load balancer deployed with Endeca Server Cluster and a network domain name for the data domain market.

FIGURE 7. Load balancer configuration for the data domain market

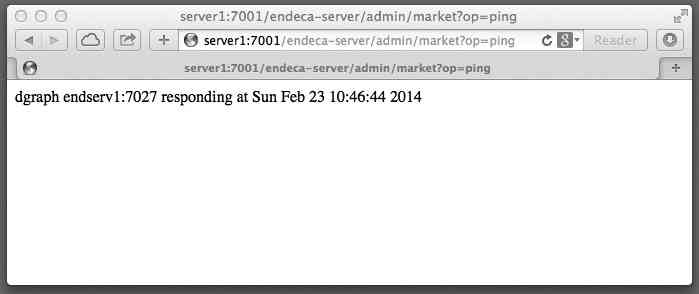

In this example, the ping administrative operation available for a domain name is used for the health check. Figure 8 shows a web browser with the health check output. When a particular data domain node stops responding to the health check, the load balancer will stop routing requests to the failed data domain node.

FIGURE 8. Web browser with the health check output for the data domain market
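The health check behavior can be sketched as a filter over the pool. This is a simplified model of what a load balancer does, not a vendor configuration: the function name is hypothetical, and the probe is passed in as a callable so that the actual ping request, whose URL format is not given here, stays outside the sketch.

```python
from typing import Callable, Iterable


def healthy_members(pool: Iterable[str], probe: Callable[[str], bool]) -> list[str]:
    """Return the pool members whose health check currently succeeds.

    probe(url) should return True for a responsive node; any exception
    (timeout, refused connection) is treated as a failed check.
    """
    healthy = []
    for url in pool:
        try:
            responsive = probe(url)
        except Exception:
            responsive = False
        if responsive:
            healthy.append(url)
    return healthy
```

In a real deployment the probe would issue the ping request over HTTP with a short timeout, and the load balancer would rerun the check on a fixed interval.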

Note that the URLs from each of the four Endeca Server Cluster nodes are HTTP, not HTTPS, meaning that we have not deployed SSL on these servers. However, the VIP and network domain name provided by the load balancer use SSL. The reason for this is that load balancers can provide SSL to non-SSL sources, which eliminates the need to deploy SSL on Endeca Server. On the load balancer, an SSL certificate from a trusted certificate authority is deployed for the company URL, mycompany.com in this case. In this example, the load balancer greatly simplifies the details of connections to Endeca Server Cluster. There is no need to worry about SSL certificates from Endeca Server; the URL used for connectivity is easy to remember and does not require an unusual port number. (The port number in this case is 443, which is the standard port number used for SSL connections.) This approach requires that a pool and VIP be created for each data domain, so to provide load balancer support for the other data domain on this cluster, data domain opinion, you would need to create another VIP and the domain name opiniondatadomain.mycompany.com. The pool for opiniondatadomain.mycompany.com would not include the URL and health check for Endeca Server 1 since there is no data domain node on this server.
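The one-pool-and-VIP-per-data-domain layout can be written down as data. The sketch below is illustrative only: the node hostnames and back-end port 7001 are hypothetical, the member URLs omit the data domain portion for brevity, and the dictionary layout does not correspond to any particular load balancer's configuration format.

```python
def build_pools(node_urls: dict, hosted_on: dict, company_domain: str = "mycompany.com") -> dict:
    """Build one load balancer pool and VIP entry per data domain."""
    pools = {}
    for data_domain, servers in hosted_on.items():
        pools[f"{data_domain}datadomain.{company_domain}"] = {
            "vip_port": 443,  # SSL is provided at the load balancer
            "members": [node_urls[s] for s in sorted(servers)],  # plain HTTP back ends
        }
    return pools


# Hypothetical hostnames and back-end port, for illustration only.
NODE_URLS = {n: f"http://endeca{n}.mycompany.com:7001" for n in range(1, 5)}
# Data domain opinion has no data domain node on Endeca Server 1.
HOSTED_ON = {"market": {1, 2, 3, 4}, "opinion": {2, 3, 4}}
POOLS = build_pools(NODE_URLS, HOSTED_ON)
```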

One final comment regarding the use of load balancers: If your IT organization already uses load balancers, then you should consider using them with all Endeca components to provide simplified URLs and SSL, even for nonclustered deployments.

Installing Endeca Server

The most up-to-date installation instructions for Endeca Server are available on the Oracle Technology Network web site. The installation files for Endeca Server are available for download from the Oracle software delivery cloud. The documentation for Endeca Server can also be downloaded from the Oracle software delivery cloud for those who prefer a local copy of documentation.

Be aware that in addition to downloading the Endeca Server installation, you will need to download the WebLogic installation bundle. The Java Development Kit (JDK), as well as the Oracle Application Development Runtime (ADR), must be installed on the server. The installation directions for Endeca Server are detailed but easy to follow; Endeca Server can easily be installed in one to two hours for a single-node installation.